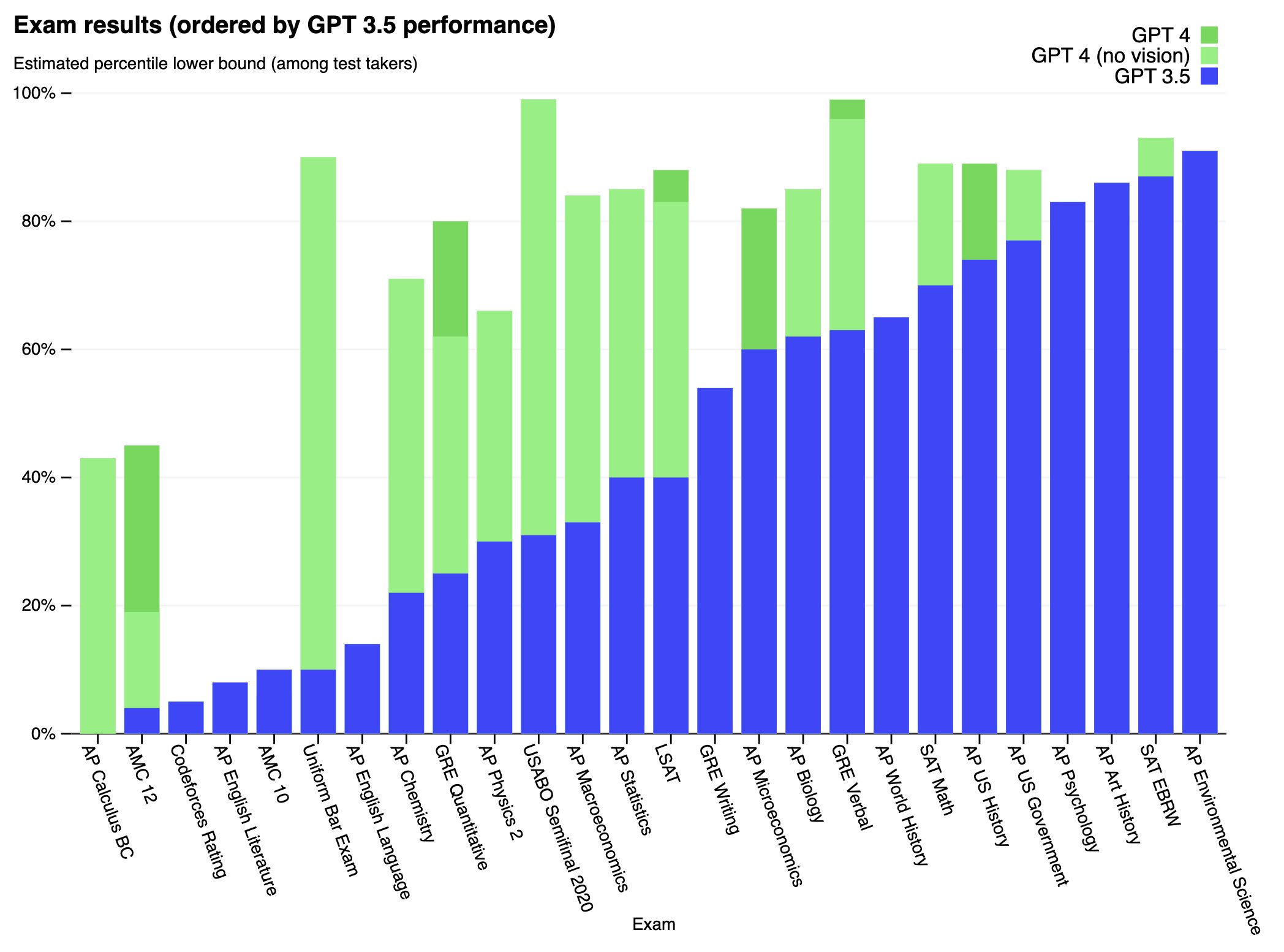

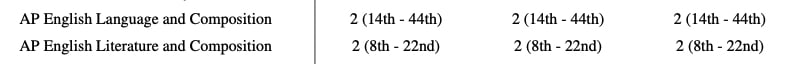

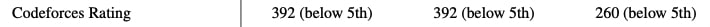

We’ve created GPT-4, the latest milestone in OpenAI’s effort in scaling up deep learning. GPT-4 is a large multimodal model (accepting image and text inputs, emitting text outputs) that, while worse than humans in many real-world scenarios, exhibits human-level performance on various professional and academic benchmarks.

Full paper available here: https://cdn.openai.com/papers/gpt-4.pdf

The model already had access to thousands of unwitting human subjects by the time ARC got access to it, possibly for months. I don't actually know how long; probably it wasn't that long. But it's common practice at labs to let employees chat with the models pretty much as soon as they finish training, and even sooner actually (e.g. checkpoints partway through training). And it wasn't just employees who had access; there were also various customers, Microsoft, etc.

ARC did not allow it to try to replicate itself on another server. The claim that they did is a straightforward factual error about what happened. But even if they had, again, it wouldn't be that bad, and in fact it would be very good to test stuff out in a controlled, monitored setting before it's too late and the system is deployed widely in a much less controlled, less monitored way.

I emphasize again that the model was set to be deployed widely; if not for the red-teaming that ARC and various others, internal and external to OpenAI, did, we would have been flying completely blind into that deployment. Now maybe you think it's just obviously wrong to deploy such models, but that's a separate conversation, and you should take it up with OpenAI, not ARC. And not just OpenAI, of course, but the entire fricken AI industry. ARC didn't make the choice to train or deploy GPT-4.