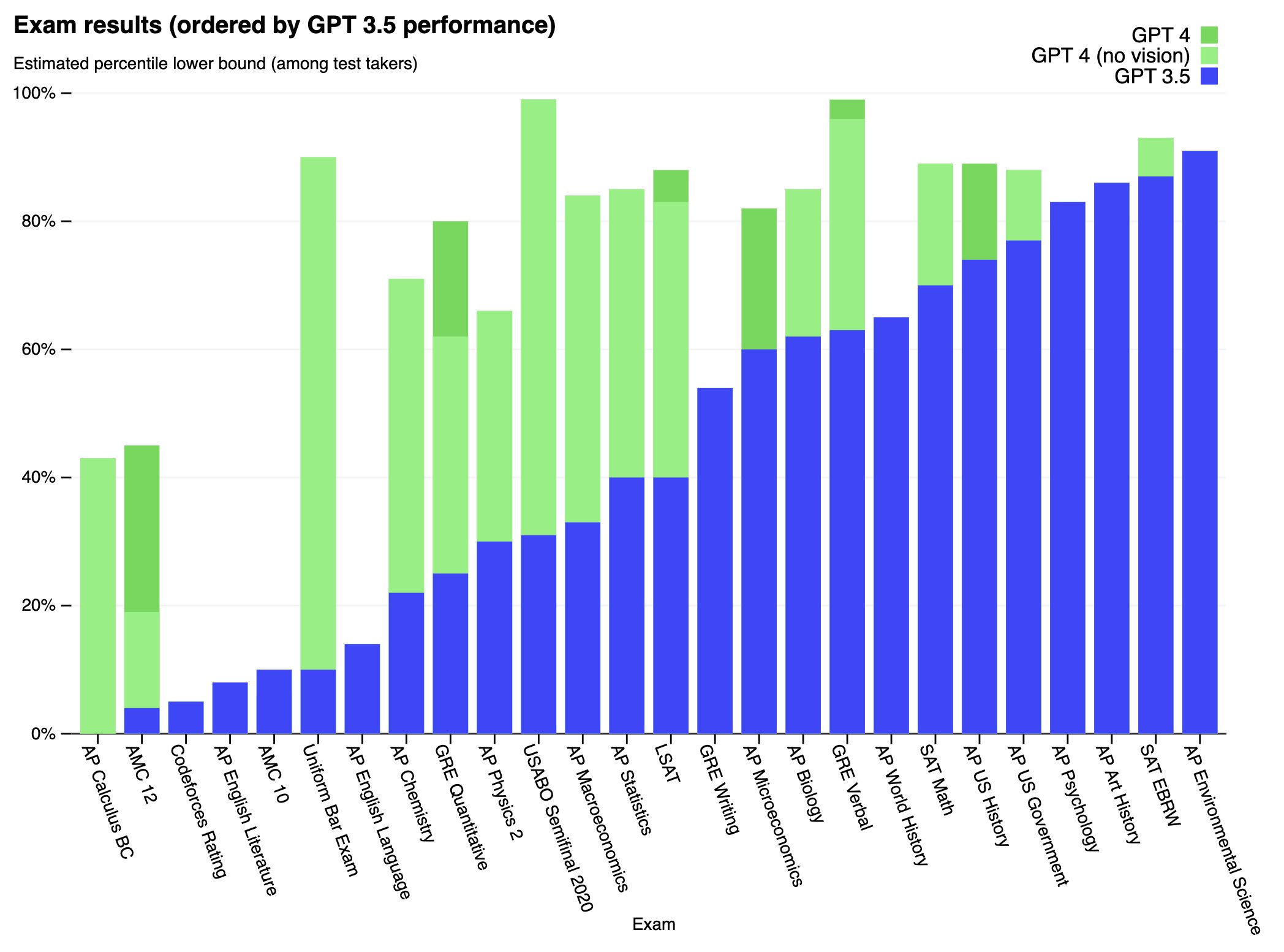

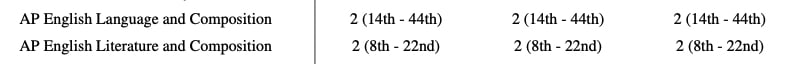

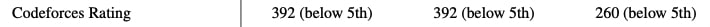

We’ve created GPT-4, the latest milestone in OpenAI’s effort in scaling up deep learning. GPT-4 is a large multimodal model (accepting image and text inputs, emitting text outputs) that, while worse than humans in many real-world scenarios, exhibits human-level performance on various professional and academic benchmarks.

Full paper available here: https://cdn.openai.com/papers/gpt-4.pdf

Can you verify that these tests were done with significant precautions? OpenAI’s paper doesn’t give much detail in that regard. For example, the model apparently had access to TaskRabbit and also attempted to “set up an open-source language model on a new server”. Were these tasks done on closed-off, air-gapped machines, or was the model really given free rein to contact unknowing human subjects and online servers?