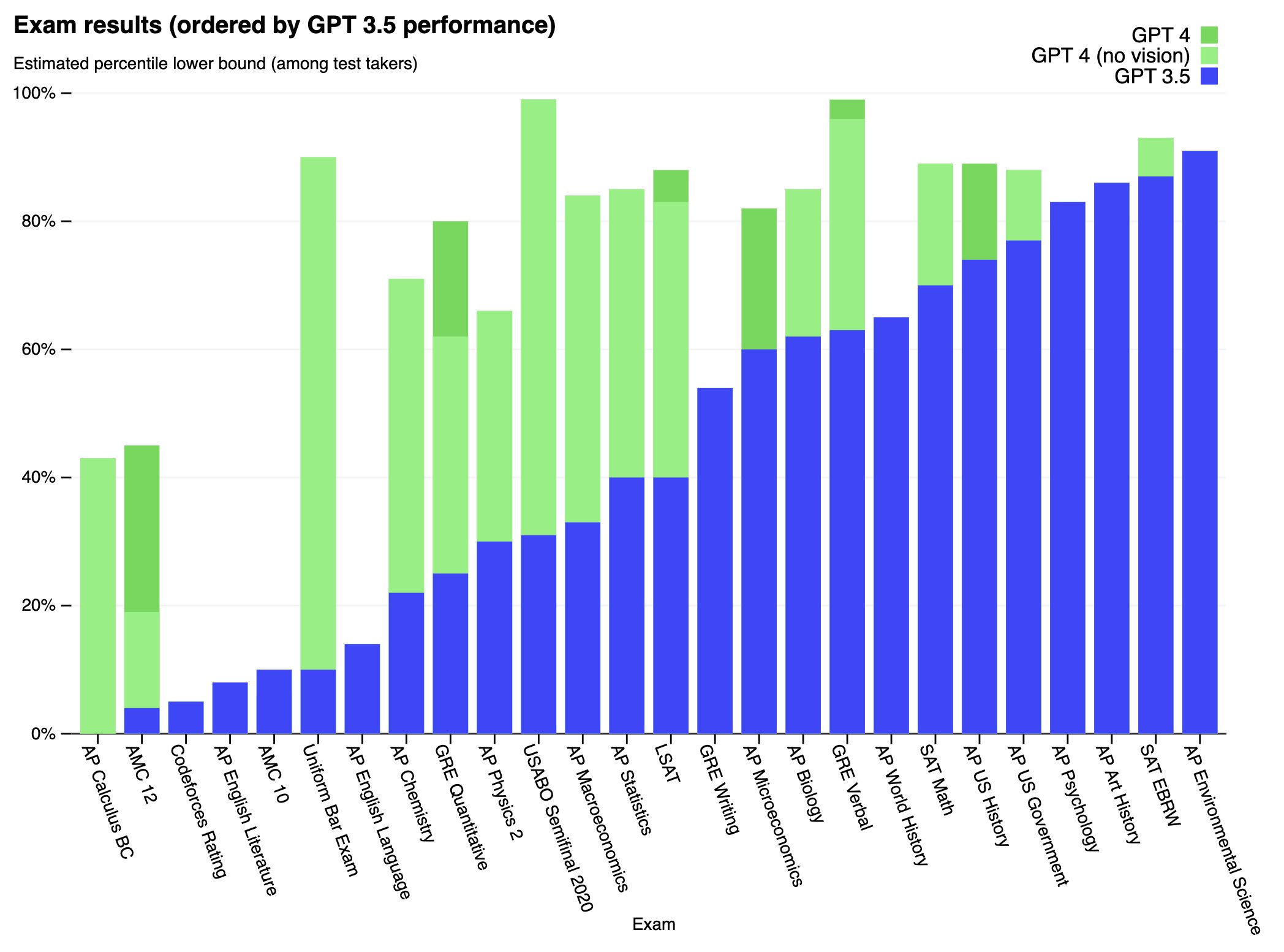

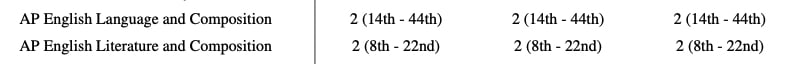

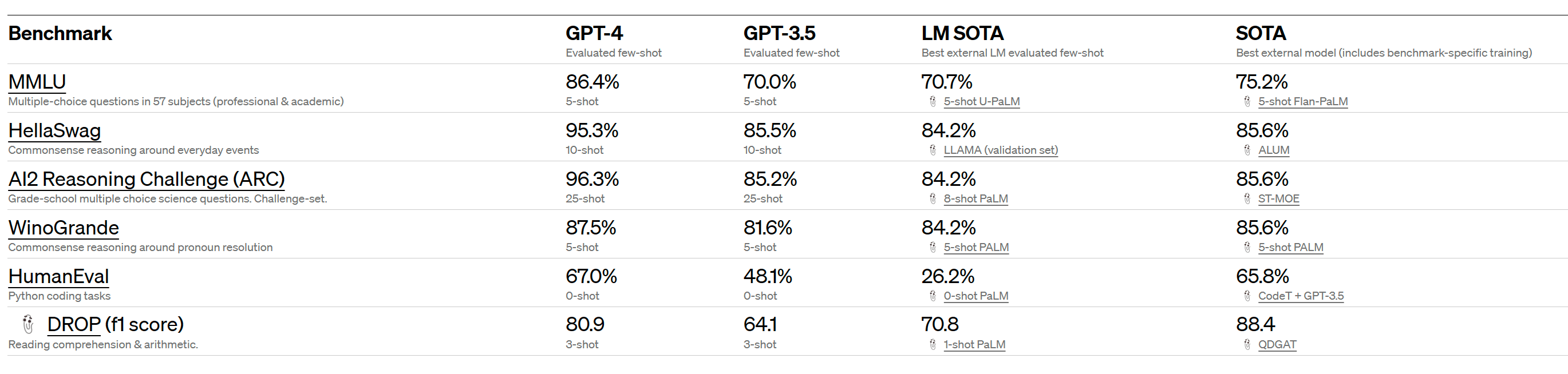

We’ve created GPT-4, the latest milestone in OpenAI’s effort in scaling up deep learning. GPT-4 is a large multimodal model (accepting image and text inputs, emitting text outputs) that, while worse than humans in many real-world scenarios, exhibits human-level performance on various professional and academic benchmarks.

Full paper available here: https://cdn.openai.com/papers/gpt-4.pdf

GPT-4's context window is up from ~4k tokens for text-davinci-003 and gpt-3.5-turbo (ChatGPT). I expect this alone will have a large effect on the capabilities of many tools built on top of existing GPT models. Many of these tools work by stuffing helpful context into a prompt, or by chaining together specialized calls to the underlying LLM using LangChain. With either method, the length of the context window ends up being a significant limitation.
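To make the limitation concrete, here is a minimal sketch of the "stuff context into the prompt" pattern. It is not how any particular tool does it: `pack_context` and `approx_tokens` are hypothetical names, and the word-count token estimate is a crude stand-in for a real tokenizer. The snippets are assumed to arrive sorted most-relevant first.

```python
from typing import Callable, List


def approx_tokens(text: str) -> int:
    """Crude stand-in for a real tokenizer (assumption): counts whitespace-separated words."""
    return len(text.split())


def pack_context(
    question: str,
    snippets: List[str],
    max_tokens: int,
    count_tokens: Callable[[str], int] = approx_tokens,
) -> str:
    """Greedily prepend snippets to the question until the token budget is spent."""
    # Reserve room for the question itself before adding any context.
    budget = max_tokens - count_tokens(question)
    kept: List[str] = []
    for snippet in snippets:
        cost = count_tokens(snippet)
        if cost > budget:
            break  # this snippet and every less relevant one is dropped
        kept.append(snippet)
        budget -= cost
    return "\n\n".join(kept + [question])


if __name__ == "__main__":
    docs = ["a b c", "d e f g", "h i"]
    # With a small budget only the first snippet fits; a larger one keeps them all.
    print(pack_context("what is x", docs, max_tokens=8))
    print(pack_context("what is x", docs, max_tokens=100))
```

Whatever does not fit in the budget never reaches the model, so a larger window translates directly into more context per call and fewer chained calls to work around the limit.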