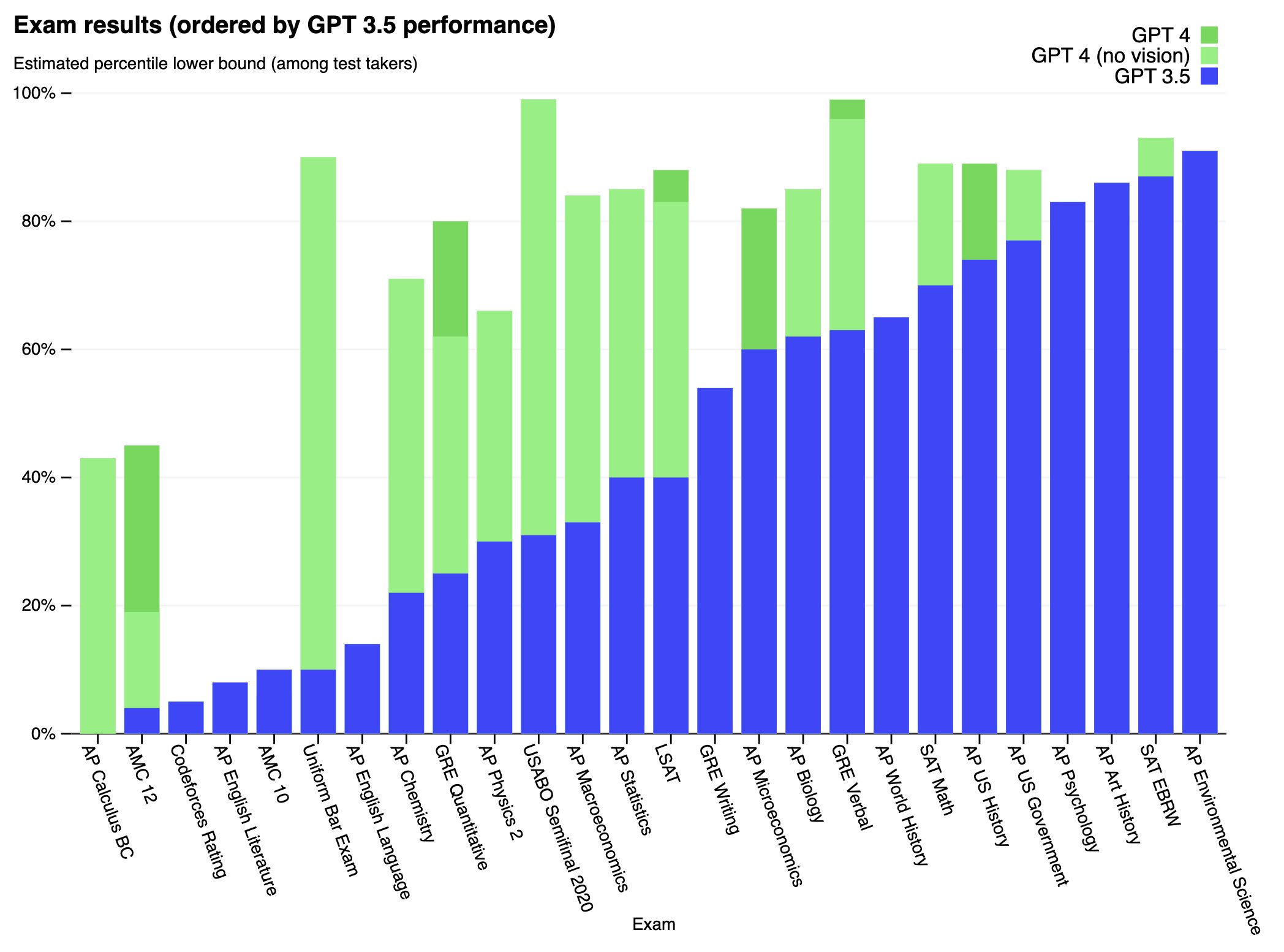

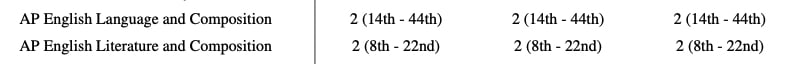

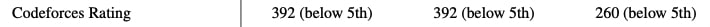

We’ve created GPT-4, the latest milestone in OpenAI’s effort in scaling up deep learning. GPT-4 is a large multimodal model (accepting image and text inputs, emitting text outputs) that, while worse than humans in many real-world scenarios, exhibits human-level performance on various professional and academic benchmarks.

Full paper available here: https://cdn.openai.com/papers/gpt-4.pdf

It's easy to construct alternative versions of the Monty Hall problem that clearly weren't in the training data. For example, in my experience GPT-4 and Bing Chat (in all modes) always get this prompt wrong:

Suppose you're on a game show, and you're given the choice of three doors: Behind one door is a car; behind the others, goats. You know that the car is always behind door number 1. You pick a door, say No. 1, and the host, who knows what's behind the doors, opens another door, say No. 3, which has a goat. He then says to you, "Do you want to pick door No. 2?" Is it to your advantage to switch your choice?
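
For anyone who wants to check the intended answer, here is a minimal simulation sketch. The function names (`play`, `win_rate`) and the convention that `car_door=None` means a randomly placed car are my own assumptions for illustration, not anything from the paper. With the car fixed behind door 1 and door 1 as the first pick, switching should never win, whereas in the classic setup switching wins about two thirds of the time.

```python
import random
from typing import Optional

DOORS = [1, 2, 3]

def play(switch: bool, car_door: Optional[int] = 1, first_pick: int = 1) -> bool:
    """Play one round; return True if the player ends up with the car.

    car_door=1 models the modified prompt (the car is always behind door 1).
    car_door=None models the classic problem (car placed uniformly at random).
    """
    if car_door is None:
        car_door = random.choice(DOORS)
    # The host knows where the car is and opens a goat door
    # that is neither the player's pick nor the car.
    host_options = [d for d in DOORS if d != first_pick and d != car_door]
    opened = random.choice(host_options)
    if switch:
        # Switch to the one remaining closed door.
        final = next(d for d in DOORS if d != first_pick and d != opened)
    else:
        final = first_pick
    return final == car_door

def win_rate(switch: bool, trials: int = 10_000, car_door: Optional[int] = 1) -> float:
    """Fraction of rounds won under a fixed switch/stay strategy."""
    wins = sum(play(switch, car_door=car_door) for _ in range(trials))
    return wins / trials

if __name__ == "__main__":
    print("modified prompt, switch:", win_rate(True))
    print("modified prompt, stay:  ", win_rate(False))
    print("classic problem, switch:", win_rate(True, car_door=None))
    print("classic problem, stay:  ", win_rate(False, car_door=None))
```

The only difference between the two cases is the prior on where the car is, which is exactly the detail the prompt changes and the models seem to ignore.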