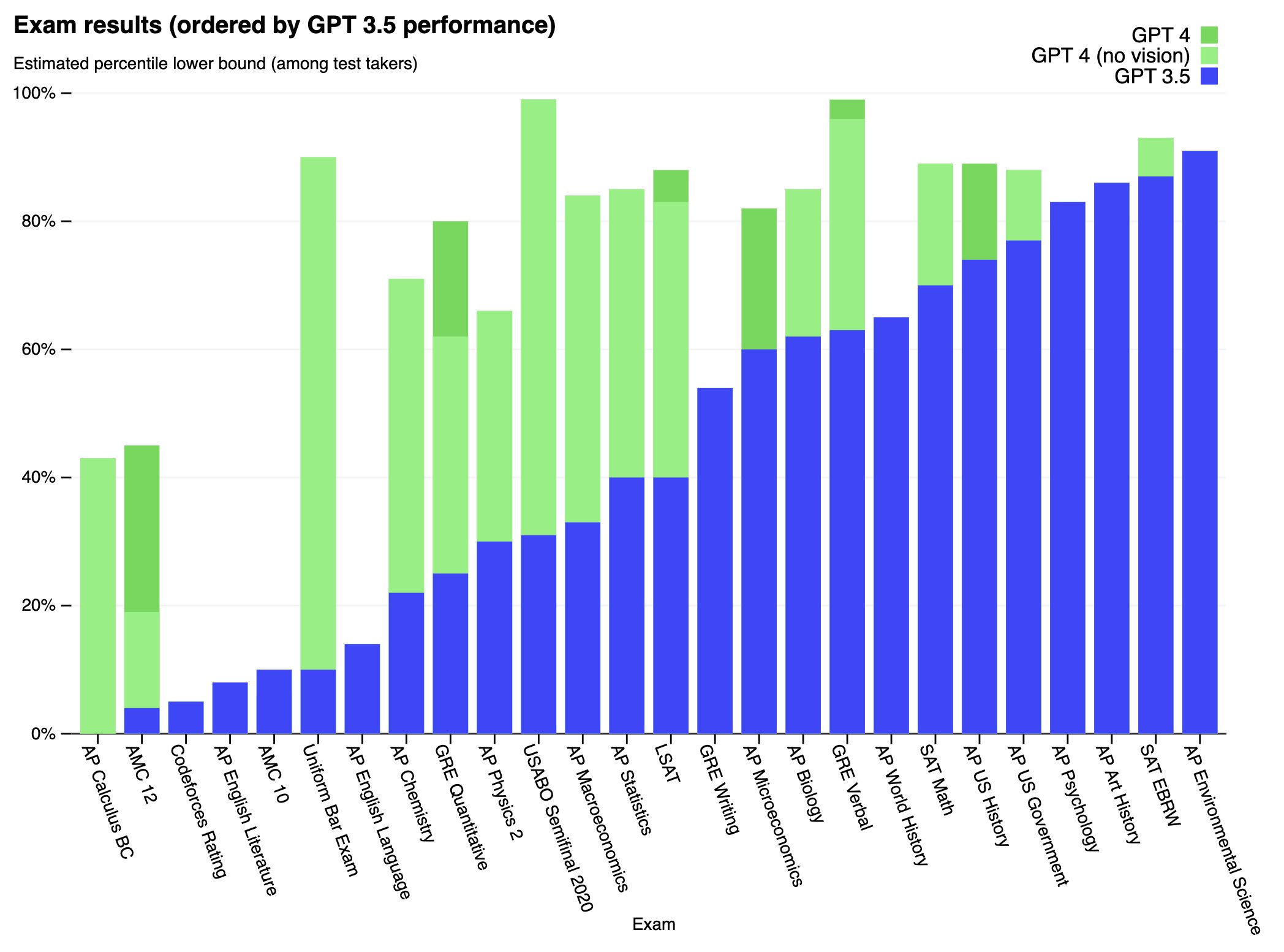

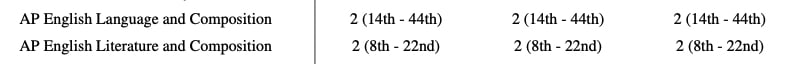

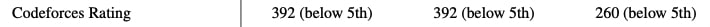

We’ve created GPT-4, the latest milestone in OpenAI’s effort in scaling up deep learning. GPT-4 is a large multimodal model (accepting image and text inputs, emitting text outputs) that, while worse than humans in many real-world scenarios, exhibits human-level performance on various professional and academic benchmarks.

Full paper available here: https://cdn.openai.com/papers/gpt-4.pdf

Interesting - to me these kinds of prompts seem less compelling, since they're largely a matter of looking things up. They're certainly easier to evaluate. But more creative tasks feel like they test the ability to apply knowledge in a novel way and to understand what various words and concepts mean, and those abilities feel more relevant to testing whether GPT-4 is more "actually intelligent".