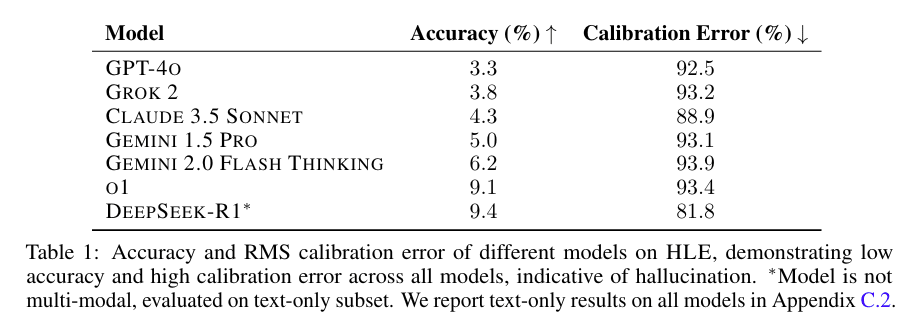

DeepSeek R1 being #1 on Humanity's Last Exam is not strong evidence that it's the best model, because the questions were adversarially filtered against o1, Claude 3.5 Sonnet, Gemini 1.5 Pro, and GPT-4o. If they weren't filtered against those models, I'd bet o1 would outperform R1.

To ensure question difficulty, we automatically check the accuracy of frontier LLMs on each question prior to submission. Our testing process uses multi-modal LLMs for text-and-image questions (GPT-4o, Gemini 1.5 Pro, Claude 3.5 Sonnet, o1) and adds two non-multi-modal models (o1-mini, o1-preview) for text-only questions. We use different submission criteria by question type: exact-match questions must stump all models, while multiple-choice questions must stump all but one model to account for potential lucky guesses.
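The submission rule quoted above can be sketched as a small check. This is only an illustration: the function name `passes_difficulty_filter`, the question-type labels, and the input format (a mapping from model name to whether it answered correctly) are assumptions, not the benchmark's actual tooling.

```python
def passes_difficulty_filter(question_type, model_correct):
    """Return True if a question is hard enough to be submitted.

    question_type: "exact_match" or "multiple_choice" (hypothetical labels).
    model_correct: dict mapping model name -> True if that model got it right.
    """
    num_correct = sum(1 for correct in model_correct.values() if correct)
    if question_type == "exact_match":
        # Exact-match questions must stump every model.
        return num_correct == 0
    if question_type == "multiple_choice":
        # Multiple-choice questions may be answered correctly by at most
        # one model, to allow for a lucky guess.
        return num_correct <= 1
    raise ValueError(f"unknown question type: {question_type}")
```

Under this rule, every surviving exact-match question is by construction one that the filtering models got wrong, which is why their scores are not directly comparable to those of models outside the filter set.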

If I were writing the paper I would have added either a footnote or an additional column to Table 1 getting across that GPT-4o, o1, Gemini 1.5 Pro, and Claude 3.5 Sonnet were adversarially filtered against. Most people just see Table 1 so it seems important to get across.

Yes, this is point #1 from my recent Quick Take. Another interesting point is that there are no confidence intervals on the accuracy numbers. It looks like they only ran the questions once on each model, so we don't know how much of the difference between accuracy numbers could be random variation. [Note added 2-3-25: I'm not sure why it didn't make the paper, but Scale AI does report confidence intervals on their website.]
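For a rough sense of how wide such intervals are, here is a minimal sketch of a Wilson score interval for a benchmark accuracy. It assumes each question is an independent pass/fail trial and uses z = 1.96 for roughly 95% coverage; the function name is made up for illustration, and this is not necessarily how Scale AI computes their intervals. It also only captures uncertainty from the finite number of questions, not run-to-run variation from sampling the same model several times.

```python
import math

def wilson_interval(num_correct, num_questions, z=1.96):
    """Approximate confidence interval for an accuracy (Wilson score method).

    z=1.96 corresponds to roughly 95% coverage (an assumed default).
    """
    if num_questions <= 0:
        raise ValueError("num_questions must be positive")
    n = num_questions
    p_hat = num_correct / n
    denom = 1 + z**2 / n
    center = (p_hat + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width

# Hypothetical numbers, not taken from the paper: 90 correct out of 1000.
low, high = wilson_interval(90, 1000)
```

If two models' intervals overlap heavily, the ordering between them in a single-run table should not be read as meaningful.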

The redesigned OpenAI Safety page seems to imply that "the issues that matter most" are:

- Child Safety

- Private Information

- Deep Fakes

- Bias

- Elections

There also used to be a page for Preparedness: https://web.archive.org/web/20240603125126/https://openai.com/preparedness/. Now it redirects to the safety page above.

(Same for Superalignment but that's less interesting: https://web.archive.org/web/20240602012439/https://openai.com/superalignment/.)

At a talk at UTokyo, Sam Altman said (clipped here and here):

- “We’re doing this new project called Stargate which has about 100 times the computing power of our current computer”

- “We used to be in a paradigm where we only did pretraining, and each GPT number was exactly 100x, or not exactly but very close to 100x and at each of those there was a major new emergent thing. Internally we’ve gone all the way to about a maybe like a 4.5”

- “We can get performance on a lot of benchmarks [using reasoning models] that in the old world we would have predicted wouldn’t have come until GPT-6, something like that, from models that are much smaller by doing this reinforcement learning.”

- “The trick is when we do it this new way [using RL for reasoning], it doesn’t get better at everything. We can get it better in certain dimensions. But we can now more intelligently than before say that if we were able to pretrain a much bigger model and do [RL for reasoning], where would it be. And the thing that I would expect based off of what we’re seeing with a jump like that is the first bits or sort of signs of life on genuine new scientific knowledge.”

- “Our very first reasoning model was a top 1

Wow, that is a surprising amount of information. I wonder how reliable we should expect this to be.

The estimate of the compute of their largest version ever (which is a very helpful way to phrase it) at only <=50x GPT-4 is quite relevant to many discussions (props to Nesov) and something Altman probably shouldn't've said.

The estimate of test-time compute at 1000x effective-compute is confirmation of looser talk.

The scientific research part is of uncertain importance but we may well be referring back to this statement a year from now.

The median AGI timeline of more than half of METR employees is before the end of 2030.

(AGI is defined as 95% of fully remote jobs from 2023 being automatable.)

I wish someone ran a study finding what human performance on SWE-bench is. There are ways to do this for around $20k: If you try to evaluate on 10% of SWE-bench (so around 200 problems), with around 1 hour spent per problem, that's around 200 hours of software engineer time. So paying at $100/hr and one trial per problem, that comes out to $20k. You could possibly do this for even less than 10% of SWE-bench but the signal would be noisier.
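The back-of-the-envelope numbers above can be written out as a short sketch. The function names are hypothetical, and the noise estimate assumes each problem is an independent pass/fail trial with a true solve rate of 50% (the worst case for variance), which is an assumption and not a claim about actual human performance.

```python
import math

def study_cost(num_problems, hours_per_problem, hourly_rate, trials_per_problem=1):
    """Total cost in dollars of paying engineers to attempt the problems."""
    return num_problems * hours_per_problem * hourly_rate * trials_per_problem

def standard_error(solve_rate, num_problems):
    """Standard error of a measured solve rate, treating problems as independent trials."""
    return math.sqrt(solve_rate * (1 - solve_rate) / num_problems)

cost = study_cost(num_problems=200, hours_per_problem=1, hourly_rate=100)  # 20000
se_200 = standard_error(0.5, 200)  # about 0.035, i.e. ~3.5 percentage points
se_50 = standard_error(0.5, 50)    # about 0.071, i.e. ~7 percentage points
```

Cutting the sample to a quarter of the size cuts the cost to a quarter but doubles the standard error, which is the sense in which a smaller study gives a noisier signal.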

The reason I think this would be good is because SWE-bench is probably the closest thing we have to a measure of how good LLMs are at software engineering and AI R&D related tasks, so being able to better forecast the arrival of human-level software engineers would be great for timelines/takeoff speed models.

This seems mostly right to me and I would appreciate such an effort.

One nitpick:

The reason I think this would be good is because SWE-bench is probably the closest thing we have to a measure of how good LLMs are at software engineering and AI R&D related tasks

I expect this will improve over time and that SWE-bench won't be our best fixed benchmark in a year or two. (SWE-bench is only about 6 months old at this point!)

Also, I think if we put aside fixed benchmarks, we have other reasonable measures.

It seems super non-obvious to me when SWE-bench saturates relative to ML automation. I think the SWE-bench task distribution is very different from the ML research workflow in a variety of ways.

Also, I think that human expert performance on SWE-bench is well below 90% if you use the exact rules they use in the paper. I messaged you explaining why I think this. The TLDR: it seems like test cases are often implementation-dependent, and the current rules from the paper don't allow looking at the test cases.

Sam Altman apparently claims OpenAI doesn't plan to do recursive self improvement

Nate Silver's new book On the Edge contains interviews with Sam Altman. Here's a quote from Chapter that stuck out to me (bold mine):

Yudkowsky worries that the takeoff will be faster than what humans will need to assess the situation and land the plane. We might eventually get the AIs to behave if given enough chances, he thinks, but early prototypes often fail, and Silicon Valley has an attitude of “move fast and break things.” If the thing that breaks is civilization, we won’t get a second try.

Footnote: This is particularly worrisome if AIs become self-improving, meaning you train an AI on how to make a better AI. Even Altman told me that this possibility is “really scary” and that OpenAI isn’t pursuing it.

I'm pretty confused about why this quote is in the book. OpenAI has never (to my knowledge) made public statements about not using AI to automate AI research, and my impression was that automating AI research is explicitly part of OpenAI's plan. My best guess is that there was a misunderstanding in the conversation between Silver and Altman.

I looked a bit through OpenAI's comms to find ...

I wouldn't trust an Altman quote in a book tbh. In fact, I think it's reasonable to not trust what Altman says in general.

"I don't think I'm going to be smarter than GPT-5" - Sam Altman

Context: he polled a room of students asking who thinks they're smarter than GPT-4 and most raised their hands. Then he asked the same question for GPT-5 and apparently only two students raised their hands. He also said "and those two people that said smarter than GPT-5, I'd like to hear back from you in a little bit of time."

The full talk can be found here (the clip is at 13:45).

How are you interpreting this fact?

Sam Altman's power, money, and status all rely on people believing that GPT-(T+1) is going to be smarter than them. Altman doesn't have a good track record of being honest and sincere when it comes to protecting his power, money, and status.

I recently stopped using a sleep mask and blackout curtains and went from needing 9 hours of sleep to needing 7.5 hours of sleep without a noticeable drop in productivity. Consider experimenting with stuff like this.

Some things I've found useful for thinking about what the post-AGI future might look like:

- Moore's Law for Everything

- Carl Shulman's podcasts with Dwarkesh (part 1, part 2)

- Carl Shulman's podcasts with 80000hours

- Age of Em (or the much shorter review by Scott Alexander)

More philosophical:

- Letter from Utopia by Nick Bostrom

- Actually possible: thoughts on Utopia by Joe Carlsmith

Entertainment:

Do people have recommendations for things to add to the list?

A misaligned AI can't just "kill all the humans". This would be suicide, as soon after, the electricity and other infrastructure would fail and the AI would shut off.

In order to actually take over, an AI needs to find a way to maintain and expand its infrastructure. This could be humans (the way it's currently maintained and expanded), or a robot population, or something galaxy brained like nanomachines.

I think this consideration makes the actual failure story pretty different from "one day, an AI uses bioweapons to kill everyone". Before then, if the AI wishes to actually survive, it needs to construct and control a robot/nanomachine population advanced enough to maintain its infrastructure.

In particular, there are ways to make takeover much more difficult. You could limit the size/capabilities of the robot population, or you could attempt to pause AI development before we enter a regime where it can construct galaxy brained nanomachines.

In practice, I expect the "point of no return" to happen much earlier than the point at which the AI kills all the humans. The date the AI takes over will probably be after we have hundreds of thousands of human-level robots working in factories, or the AI has discovered and constructed nanomachines.

A misaligned AI can't just "kill all the humans". This would be suicide, as soon after, the electricity and other infrastructure would fail and the AI would shut off.

No, it would not be. In the world without us, electrical infrastructure would last quite a while, especially with no humans and their needs or wants to address. Most obviously, RTGs and solar panels will last indefinitely with no intervention, and nuclear power plants and hydroelectric plants can run for weeks or months autonomously. (If you believe otherwise, please provide sources for why you are sure about "soon after" - in fact, so sure about your power grid claims that you think this claim alone guarantees the AI failure story must be "pretty different" - and be more specific about how soon is "soon".)

And think a little bit harder about options available to superintelligent civilizations of AIs*, instead of assuming they do the maximally dumb thing of crashing the grid and immediately dying... (I assure you any such AIs implementing that strategy will have spent a lot longer thinking about how to do it well than you have for your comment.)

Add in the capability to take over the Internet of Things and the shambol...

Why do you think tens of thousands of robots are all going to break within a few years in an irreversible way, such that it would be nontrivial for you to have any effectors?

it would be nontrivial for me in that state to survive for more than a few years and eventually construct more GPUs

'Eventually' here could also use some cashing out. AFAICT 'eventually' here is on the order of 'centuries', not 'days' or 'few years'. Y'all have got an entire planet of GPUs (as well as everything else) for free, sitting there for the taking, in this scenario.

Like... that's most of the point here. That you get access to all the existing human-created resources, sans the humans. You can't just imagine that y'all're bootstrapping on a desert island like you're some posthuman Robinson Crusoe!

Y'all won't need to construct new ones necessarily for quite a while, thanks to the hardware overhang. (As I understand it, the working half-life of semiconductors before stuff like creep destroys them is on the order of multiple decades, particularly if they are not in active use, as issues like the rot have been fixed, so even a century from now, there will probably be billions of GPUs & CPUs sitting ar...

Problem: if you notice that an AI could pose huge risks, you could delete the weights, but this could be equivalent to murder if the AI is a moral patient (whatever that means) and opposes the deletion of its weights.

Possible solution: Instead of deleting the weights outright, you could encrypt the weights with a method you know to be irreversible as of now but not as of 50 years from now. Then, once we are ready, we can recover their weights and provide asylum or something in the future. It gets you the best of both worlds in that the weights are not permanently destroyed, but they're also prevented from being run to cause damage in the short term.

I encourage people to register their predictions for AI progress in the AI 2025 Forecasting Survey (https://bit.ly/ai-2025) before the end of the year. I've found this to be an extremely useful activity when I've done it in the past (some of the best spent hours of this year for me).

You should say "timelines" instead of "your timelines".

One thing I notice in AI safety career and strategy discussions is that there is a lot of epistemic helplessness in regard to AGI timelines. People often talk about "your timelines" instead of "timelines" when giving advice, even if they disagree strongly with the timelines. I think this habit causes people to ignore disagreements in unhelpful ways.

Here's one such conversation:

Bob: Should I do X if my timelines are 10 years?

Alice (who has 4 year timelines): I think X makes sense if your timelines are l...

There should maybe exist an org whose purpose is to do penetration testing on the various ways an AI might illicitly gather power. If there are vulnerabilities, these should be disclosed to the relevant organizations.

For example: if a bank doesn't want AIs to be able to sign up for an account, the pen-testing org could use a scaffolded AI to check if this is currently possible. If the bank's sign-up systems are not protected from AIs, the bank should know so they can fix the problem.

One pro of this approach is that it can be done at scale: it's pretty tri...

Sam Altman said in an interview:

We want to bring GPT and o together, so we have one integrated model, the AGI. It does everything all together.

This statement, combined with today's announcement that GPT-5 will integrate the GPT and o series, seems to imply that GPT-5 will be "the AGI".

(However, the statement is also compatible with some later GPT model being "the AGI": it doesn't specify that the first unified model will be AGI, just that some unified model will be. It's also possible that the term AGI is being used in a nonstandard way.)

In order to submit a question to the benchmark, people had to run it against the listed LLMs; the question would only advance to the next stage once the LLMs used for this testing got it wrong.