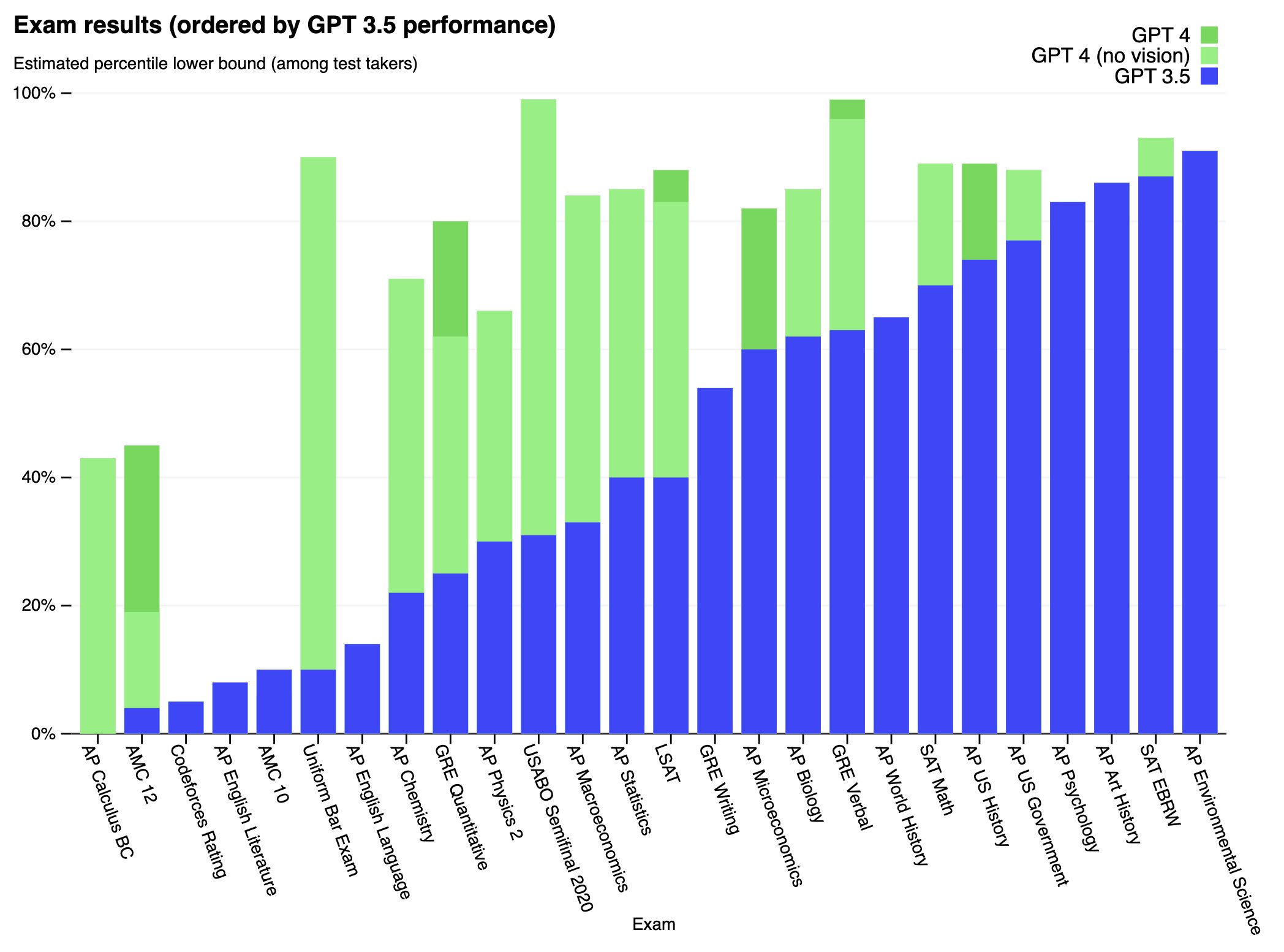

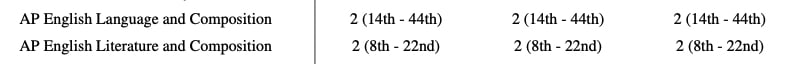

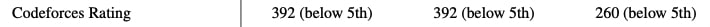

We’ve created GPT-4, the latest milestone in OpenAI’s effort in scaling up deep learning. GPT-4 is a large multimodal model (accepting image and text inputs, emitting text outputs) that, while worse than humans in many real-world scenarios, exhibits human-level performance on various professional and academic benchmarks.

Full paper available here: https://cdn.openai.com/papers/gpt-4.pdf

Not at all. I may have misunderstood what they did, but it seemed rather like giving a toddler a loaded gun and being happy they weren’t able to shoot it. Is it actually wise to give a likely unaligned AI with poorly defined capabilities access to something like TaskRabbit just to see whether it does anything dangerous? Isn’t this the exact scenario people on this forum are afraid of?