I believe there are people with far greater knowledge than me who can point out where I am wrong. I do suspect my reasoning is wrong, but I cannot see why it would be infeasible to train a sub-AGI AI that is most likely aligned and able to solve AI alignment.

My assumptions are as follows:

- Current AI seems aligned to the best of its ability.

- PhD-level researchers would eventually solve AI alignment if given enough time.

- PhD-level intelligence is below AGI-level intelligence.

- There is no clear reason why AI built with current-paradigm technology would become unaligned before reaching PhD-level intelligence.

- We could train AI until it reaches PhD-level intelligence, and then let it solve AI alignment, without it needing to self-improve.

The assumption I am least confident in is the fourth, since we have no clear way of knowing at what level of intelligence an AI model would become unaligned.

Multiple organisations already seem to think that training AI to solve alignment for us is the best path (e.g. Superalignment).

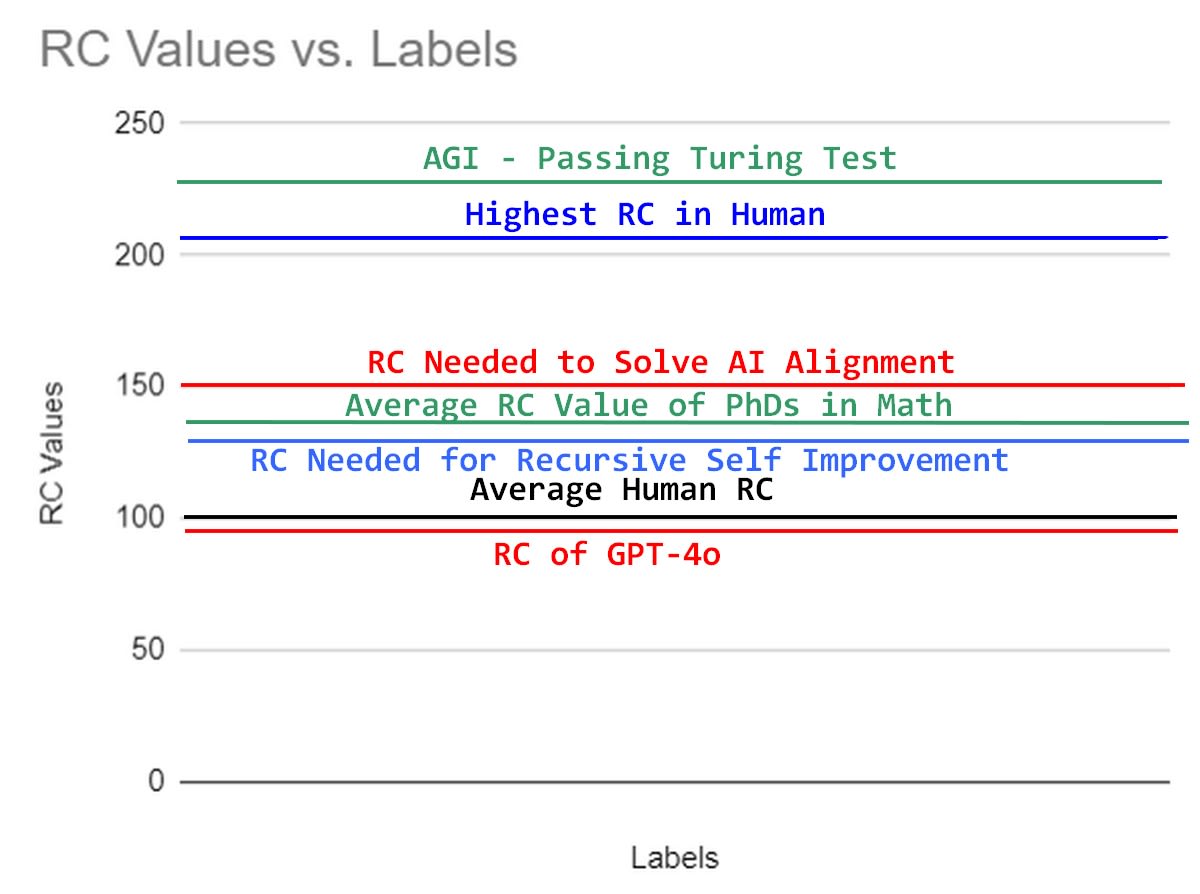

Attached is my mental model of the intelligence that different tasks require and that different people have.

Figure 1: My mental model of natural research capability (RC), which is basically IQ but more strongly correlated with research ability. The intelligence needed to align AI is above average PhD level but below that of the smartest human in the world, and even further from AGI.

I think what this highlights is that there's a missing assumption. An analogy: Aristotle (with just the knowledge he historically had) might struggle to outsource the design of a quantum computer to a bunch of modern physics PhDs because (a) Aristotle lacks even the conceptual foundation to know what the objective is, (b) Aristotle has no idea what to ask for, and (c) Aristotle has no ability to check their work, because he has flatly wrong priors about which of the physicists' assumptions are or aren't correct. The solution would be for Aristotle to go learn a bunch of quantum mechanics (possibly with some help from the physics PhDs) before even attempting to outsource the actual task. (And Aristotle himself would likely struggle even with learning quantum mechanics; he would probably raise philosophical objections all over the place and be wrong about them.)