Progress in AI has largely been a function of increasing compute, human software research efforts, and serial time/steps. Throwing more compute at researchers has improved performance both directly and indirectly (e.g. by enabling more experiments, refining evaluation functions in chess, training neural networks, or making algorithms that work best with large compute more attractive).

Historically compute has grown by many orders of magnitude, while human labor applied to AI and supporting software by only a few. And on plausible decompositions of progress (allowing for adjustment of software to current hardware and vice versa), hardware growth accounts for more of the progress over time than human labor input growth.

So if you're going to use an AI production function for tech forecasting based on inputs (which do relatively OK by the standards of tech forecasting), it's best to use all of compute, labor, and time, but it makes sense for compute to have pride of place and take in more modeling effort and attention, since it's the biggest source of change (particularly when including software gains downstream of hardware technology and expenditures).

Thinking about hardware h...

I commend this comment and concur with the importance of hardware, the straw-manning of Moravec, etc.

However I do think that EY had a few valid criticisms of Ajeya's model in particular - it ends up smearing probability mass over many anchors or sub-models, most of which are arguably poorly grounded in deep engineering knowledge. And yes you can use it to create your own model, but most people won't do that and are just looking at the default median conclusion.

Moore's Law is petering out as we run up against the constraints of physics for practical irreversible computers, but the brain is also - at best - already at those same limits. So that should substantially reduce uncertainty concerning the hardware side (hardware parity now/soon), and thus place most of the uncertainty around software/algorithm iteration progress. The important algorithmic advances tend to change asymptotic scaling curvature rather than progress linearly, and really all the key uncertainty is over that - which I think is what EY is gesturing at, and rightly so.

Historically compute has grown by many orders of magnitude, while human labor applied to AI and supporting software by only a few. And on plausible decompositions of progress (allowing for adjustment of software to current hardware and vice versa), hardware growth accounts for more of the progress over time than human labor input growth.

So if you're going to use an AI production function for tech forecasting based on inputs (which do relatively OK by the standards of tech forecasting), it's best to use all of compute, labor, and time, but it makes sense for compute to have pride of place and take in more modeling effort and attention, since it's the biggest source of change (particularly when including software gains downstream of hardware technology and expenditures).

I don't understand the logical leap from "human labor applied to AI didn't grow much" to "we can ignore human labor". The amount of labor invested in AI research is related to the time derivative of progress on the algorithms axis. Labor held constant is not the same as algorithms held constant. So, we are still talking about the problem of predicting when AI-capability(algorithms(t),compute(t)) reaches human...
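To make the "labor is a derivative, not a level" point concrete, here is a minimal toy sketch. Everything in it is an assumption for illustration only: the function name `simulate`, the linear research rate, and the exponential compute growth are not taken from any of the studies discussed.

```python
def simulate(years, labor=1.0, research_rate=0.1, compute_growth=1.5):
    """Toy model: labor sets the rate of change of the algorithms level.

    Assumptions (hypothetical, for illustration):
      - d(algorithms)/dt = research_rate * labor
      - compute grows by a fixed factor each year
    """
    algorithms, compute = 1.0, 1.0
    history = []
    for year in range(years + 1):
        history.append((year, algorithms, compute))
        algorithms += research_rate * labor  # constant labor still adds progress
        compute *= compute_growth
    return history


history = simulate(10)
first_year, last_year = history[0], history[-1]
print("algorithms level:", first_year[1], "->", round(last_year[1], 3))
print("compute level:   ", first_year[2], "->", round(last_year[2], 3))
```

With labor held flat for the whole run, the algorithms level still rises every year, which is why "labor didn't grow" cannot by itself be read as "algorithms didn't change".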

I will have to look at these studies in detail in order to understand, but I'm confused about how this can pass some obvious tests. For example, do you claim that alpha-beta pruning can match AlphaGo given some not-crazy advantage in compute? Do you claim that SVMs can do SOTA image classification with not-crazy advantage in compute (or with any amount of compute with the same training data)? Can Eliza-style chatbots compete with GPT3 however we scale them up?

My model is something like:

In this model, it makes sense to think of the "contribution" of new algorithms as the factor by which they enable more efficient conversion of compute to performance and count the increased performance because the new algorithms can absorb m...

What Moravec says is merely that $1k human-level compute will become available in the '2020s', and offers several different trendline extrapolations: only the most aggressive puts us at cheap human-level compute in 2020/2021 (note the units on his graph are in decades). On the other extrapolations, we don't hit cheap human-compute until the end of the decade. He also doesn't commit to how long it takes to turn compute into powerful systems, it's more of a pre-requisite: only once the compute is available can R&D really start, same way that DL didn't start instantly in 2010 when various levels of compute/$ were hit. Seeds take time to sprout, to use his metaphor.

I think this is uncharitable and most likely based on a misreading of Moravec. (And generally with gwern on this one.)

As far as I can tell, the source for your attribution of this "prediction" is:

"If this rate of improvement were to continue into the next century, the 10 teraops required for a humanlike computer would be available in a $10 million supercomputer before 2010 and in a $1,000 personal computer by 2030."

As far as I can tell from the surrounding text, his "prediction" for transformative impacts from AI was something like "between 2010 and 2030" with broad error bars.
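As a rough check on what the quoted passage implies, the two price points (10 teraops for $10 million around 2010, and for $1,000 around 2030) can be turned into an implied doubling time for compute per dollar. This is only a sketch: it assumes a constant exponential trend and treats "before 2010" and "by 2030" as exactly 20 years apart, and the helper name `implied_doubling_time` is made up for this example.

```python
import math


def implied_doubling_time(cost_start, cost_end, years):
    """Years per doubling of compute per dollar, assuming a constant exponential trend."""
    doublings = math.log2(cost_start / cost_end)
    return years / doublings


# Price points from the Moravec quote; the 20-year gap is an approximation.
doubling_years = implied_doubling_time(10_000_000, 1_000, 2030 - 2010)
print(f"{doubling_years:.2f} years per doubling of compute per dollar")
```

Under those assumptions the quote corresponds to a doubling roughly every year and a half, i.e. an ordinary Moore's-law-style extrapolation rather than anything more aggressive.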

Adding to what Paul said: jacob_cannell points to this comment which claims that in Mind Children Moravec predicted human-level AGI in 2028.

Moravec, "Mind Children", page 68: "Human equivalence in 40 years". There he is actually talking about human-level intelligent machines arriving by 2028 - not just the hardware you would theoretically require to build one if you had the ten million dollars to spend on it.

I just went and skimmed Mind Children. He's predicting human-equivalent computational power on a personal computer in 40 years. He seems to say that humans will within 50 years be surpassed in every important way by machines (page 70, below), but I haven't found a more precise or short-term statement yet.

The robot who will work alongside us in half a century will have some interesting properties. Its reasoning abilities should be astonishingly better than a human's—even today's puny systems are much better in some areas. But its perceptual and motor abilities will probably be comparable to ours. Most interestingly, this artificial person will be highly changeable, both as an individual and from one of its generations to the next. But solitary, toiling robots, however compet...

Here are the graphs from Hippke (he or I should publish a summary at some point, sorry).

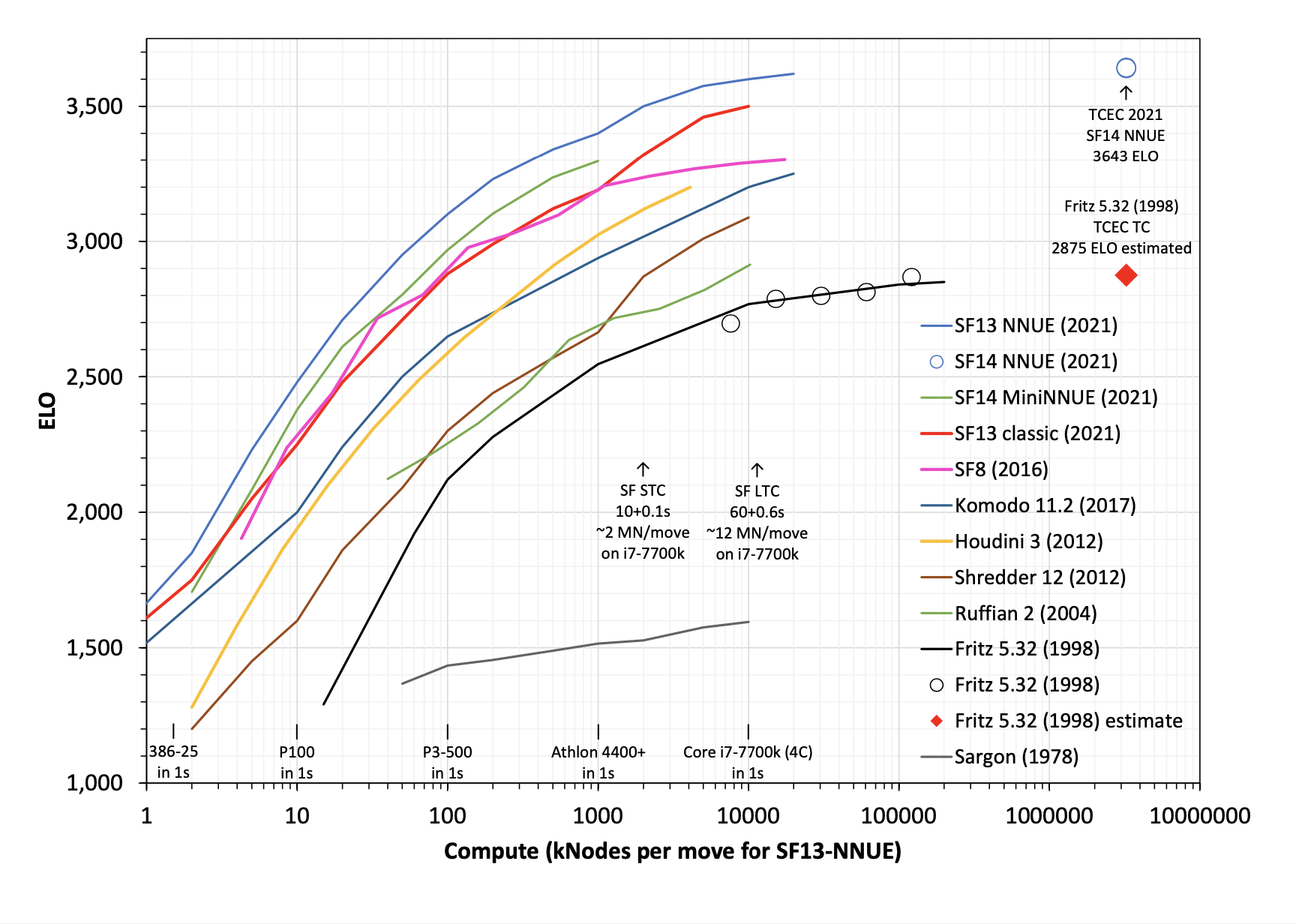

I wanted to compare Fritz (which won WCCC in 1995) to a modern engine to understand the effects of hardware and software performance. I think the time controls for that tournament are similar to SF STC. I wanted to compare to SF8 rather than one of the NNUE engines to isolate out the effect of compute at development time and just look at test-time compute.

So having modern algorithms would have let you win WCCC while spending about 50x less on compute than the winner. Having modern computer hardware would have let you win WCCC spending way more than 1000x less on compute than the winner. Measured this way software progress seems to be several times less important than hardware progress despite much faster scale-up of investment in software.

But instead of asking "how well does hardware/software progress help you get to 1995 performance?" you could ask "how well does hardware/software progress get you to 2015 performance?" and on that metric it looks like software progress is way more important because you basically just can't scale old algorithms up to modern performance.

The relevant measure...

Meta-comment:

I noticed that I found it very difficult to read through this post, even though I felt the content was important, because of the (deliberately) condescending style. I also noticed that I'm finding it difficult to take the ideas as seriously as I think I should, again due to the style. I did manage to read through it in the end, because I do think it's important, and I think I am mostly able to avoid letting the style influence my judgments. But I find it fascinating to watch my own reaction to the post, and I'm wondering if others have any (constructive) insights on this.

In general I've noticed that I have a very hard time reading things that are written in a polemical, condescending, insulting, or ridiculing manner. This is particularly true of course if the target is a group / person / idea that I happen to like. But even if it's written by someone on "my side" I find I have a hard time getting myself to read it. There have been several times when I've been told I should really go read a certain book, blog, article, etc., and that it has important content I should know about, but I couldn't get myself to read the whole thing due to the polemical or insulting way in...

Things I instinctively observed slash that my model believes that I got while reading that seem relevant, not attempting to justify them at this time:

I would very much like to read your attempt at conveying the core thing - if nothing else, it'll give another angle from which to try to grasp it.

I find it concerning that you felt the need to write "This is not at all a criticism of the way this post was written. I am simply curious about my own reaction to it" (and still got downvoted?).

For my part, I both believe that this post contains valuable content and good arguments, and that it was annoying / rude / bothersome in certain sections.

I had a pretty strong negative reaction to it. I got the feeling that the post derives much of its rhetorical force from setting up an intentionally stupid character who can be condescended to, and that this is used to sneak in a conclusion that would seem much weaker without that device.

When I try to mentally simulate negative reader-reactions to the dialogue, I usually get a complicated feeling that's some combination of:

How right/wrong do you think this is, as a model of what makes the dialogue harder or less pleasant to read from your perspective?

(I feel a little wary of stating my model above, since (a) maybe it's totally off, and (b) it can be rude to guess at other people's mental states. But so far this conversation has felt very abstract to me, so maybe this can at least serve as a prompt to go more concrete. E.g., 'I find it hard to read condescending things' is very vague about which parts of the dialogue we're talking about, about what makes them feel condescending, and about how the feeling-of-condescension affects the sentence-parsing-and-evaluating experience.)

I think part of what I was reacting to is a kind of half-formed argument that goes something like:

Again, this isn't actually an argument I would make. It's just me trying to articulate my initial negative reactions to the post.

I had mixed feelings about the dialogue personally. I enjoy the writing style and think Eliezer is a great writer with a lot of good opinions and arguments, which made it enjoyable.

But at the same time, it felt like he was taking down a strawman. Maybe you’d label it part of “conflict aversion”, but I tend to get a negative reaction to take-downs of straw-people who agree with me.

To give an unfair and exaggerated comparison, it would be a bit like reading a take-down of a straw-rationalist in which the straw-rationalist occasionally insists such things as “we should not be emotional” or “we should always use Bayes’ Theorem in every problem we encounter.” It should hopefully be easy to see why a rationalist might react negatively to reading that sort of dialogue.

My posting this comment will be contrary to the moderation disclaimer advising not to talk about tone. But FWIW, I react similarly and I skip reading things written in this way, interpreting them as manipulating me into believing the writer is hypercompetent.

I've gotten one private message expressing more or less the same thing about this post, so I don't think this is a super unusual reaction.

Short summary: Biological anchors are a bad way to predict AGI. It’s a case of “argument from comparable resource consumption.” Analogy: human brains use 20 Watts. Therefore, when we have computers with 20 Watts, we’ll have AGI! The 2020 OpenPhil estimate of 2050 is based on a biological anchor, so we should ignore it.

Longer summary:

Lots of folks made bad AGI predictions by asking:

To find (1), they use a “biological anchor,” like the computing power of the human brain, or the total compute used to evolve human brains.

Hans Moravec, 1988: the human brain uses 10^13 ops/s, and computers with this power will be available in 2010.

Eliezer objects that:

Heartened by a strong-upvote for my attempt at condensing Eliezer's object-level claim about timeline estimates, I shall now attempt condensing Eliezer's meta-level "core thing".

Making a map of your map is another one of those techniques that seem to provide more grounding but do not actually.

Sounds to me like one of the things Eliezer is pointing at in Hero Licensing:

Look, thinking things like that is just not how the inside of my head is organized. There’s just the book I have in my head and the question of whether I can translate that image into reality. My mental world is about the book, not about me.

You do want to train your brain, and you want to understand your strengths and weaknesses. But dwelling on your biases at the expense of the object level isn't actually usually the best way to give your brain training data and tweak its performance.

I think there's a lesson here that, e.g., Scott Alexander hadn't fully internalized as of his 2017 Inadequate Equilibria review. There's a temptation to "go meta" and find some cleaner, more principled, more objective-sounding algorithm to follow than just "learn lots and lots of object-level facts so you can keep refining your model, learn some facts about your brain too so you can know how much to trust it in different domains, and just keep doing that".

But in fact there's no a priori reason to expect there to...

This is good enough that it should be a top level post.

The prosthesis vs. strength training dichotomy in particular seems extremely important.

The two extracts from this post that I found most interesting/helpful:

...The problem is that the resource gets consumed differently, so base-rate arguments from resource consumption end up utterly unhelpful in real life. The human brain consumes around 20 watts of power. Can we thereby conclude that an AGI should consume around 20 watts of power, and that, when technology advances to the point of being able to supply around 20 watts of power to computers, we'll get AGI?

I'm saying that Moravec's "argument from comparable resource consumption" must be in general invalid, because it Proves Too Much. If it's in general valid to reason about comparable resource consumption, then it should be equally valid to reason from energy consumed as from computation consumed, and pick energy consumption instead to call the basis of your median estimate.

You say that AIs consume energy in a very different way from brains? Well, they'll also consume computations in a very different way from brains! The only difference between these two cases is that you know something about how humans eat food and break it down in their stomachs and convert it into ATP that gets consumed by

I wrote a lengthy exegesis of Humbali's confusion around “maximum entropy”, which I decided ended up somewhere between a comment and a post in terms of quality, so I put it here in "Shortform". I'm new to contributing content on AF, so meta-level feedback about how best to use the different channels (commenting, shortform, posting) is welcome.

This is a long post that I have only skimmed.

It seems like the ante, in support of any claim like "X forecasting method doesn't work [historically]," is to compare it to some other forecasting method on offer---whatever is being claimed as the default to be used in X's absence. (edited to add "historically")

It looks to me like historical forecasts that look like biological anchors have fared relatively well compared to the alternatives, but I could easily be moved by someone giving some evidence about what kind of methodology would have worked well or poorly, or what kinds of forecasts were actually being made at the time.

The methodological points in this post may be sound even if it's totally ungrounded in track record, but at that point the title is mostly misleading clickbait.

(My main complaint in this comment is "what's the alternative you are comparing to?" That said, the particular claims about Moravec's views also look fairly uncharitable to me, as Carl has pointed out and I've raised to Eliezer. It does not seem to me from reading Moravec's writing in 1988 like he expects TAI in 2010 rather than 2030. Maybe Eliezer is better at understanding what people are saying. But he often describes the views of people in 2020, who I can talk to and confirm, and does not in fact do a good job.)

I think it's fine to say that you think something else is better without being able to precisely say what it is. I just think "the trick that never works" is an overstatement if you aren't providing evidence about whether it has worked, and that it's hard to provide such evidence without saying something about what you are comparing to.

(Like I said though, I just skimmed the post and it's possible it contains evidence or argument that I didn't catch.)

It's possible the action is in disagreements about Moravec's view rather than the lack of an alternative, but it's hard to say because it's unclear how good Eliezer thinks the alternative is. I think that looking at Moravec's view in hindsight it doesn't seem crazy at all, and e.g. I think it's more reasonable than Eliezer's views from 10 years later.

I think this does not do justice to Ajeya's bio anchors model. Carl already said the important bits, but here are some more points:

But, if you insist on the error of anchoring on biology, you could perhaps do better by seeing a spectrum between two bad anchors. This lets you notice a changing reality, at all, which is why I regard it as a helpful thing to say to you and not a pure persuasive superweapon of unsound argument. Instead of just fixating on one bad anchor, the hybrid of biological anchoring with whatever knowledge you currently have about optimization, you can notice how reality seems to be shifting between two biological bad anchors over time, and so have an eye on the changing reality at all. Your new estimate in terms of gradient descent is stepping away from evolutionary computation and toward the individual-brain estimate by ten orders of magnitude, using the fact that you now know a little more about optimization than natural selection knew; and now that you can see the change in reality over time, in terms of the two anchors, you can wonder if there are more shifts ahead.

This is exactly what the bio anchors framework is already doing? It has the ...

My take on the exercise:

Is Humbali right that generic uncertainty about maybe being wrong, without other extra premises, should increase the entropy of one's probability distribution over AGI, thereby moving out its median further away in time?

Short version: Nah. For example, if you were wrong by dint of failing to consider the right hypothesis, you can correct for it by considering predictable properties of the hypotheses you missed (even if you don't think you can correctly imagine the true research pathway or w/e in advance). And if you were wrong in your calculations of the quantities you did consider, correction will regress you towards your priors, which are simplicity-based rather than maxent.

Long version: Let's set aside for the moment the question of what the "correct" maxent distribution on AGI timelines is (which, as others have noted, depends a bit on how you dice up the space of possible years). I don't think this is where the action is, anyway.

Let's suppose that we're an aspiring Bayesian considering that we may have made some mistakes in our calculations. Where might those mistakes have been? Perhaps:

In my view, the biological anchors and the Very Serious estimates derived therefrom are really useful for the following very narrow yet plausibly impactful purpose: persuading people, whose intuitive sense of biological anchors as lower bounds leads their AGI timeline to stretch out to 2100 or beyond, that they should really have shorter timelines. This is almost touched on in the dialogue where the OpenPhil character says “isn’t this at least a soft upper bound?” but Eliezer dismisses it as neither an upper nor a lower bound. I don’t disagree with Eliezer’s actual claim there, but I think he may be missing the value of putting well-researched soft upper bounds on a bunch of variables that generally well-read people often perceive as lower bounds on AGI’s arrival time—and guess to be much further away than they are. Even if those variables provide almost no relevant evidence to someone who has the appropriate kind of uncertainty about AGI’s compute requirements as a function of humanity’s knowledge about AI.

From this perspective, aggregating all the biological anchors with mixture weights tuned to produce a distribution whose median matches the psychological plausibility of Platt’s Law is a way to make the report as a whole Overton-window-compatible, while enabling readers to widen their distribution enough to put substantial probability mass on sooner years than the Overton window would have permitted, or even to decide for themselves to down-weight the larger biological anchors and shift their entire distribution sooner.

In my view, the biological anchors and the Very Serious estimates derived therefrom are really useful for the following very narrow yet plausibly impactful purpose

I don't understand why it's not just useful directly. Saying that the numbers are not true upper or lower bounds seems like it's expecting way too much!

They're not even labeled as bounds (at least in the headline). They're supposed to be "anchors".

Suppose you'd never done the analysis to know how much compute a human brain uses, or how much compute all of evolution had used. Wouldn't this report be super useful to you?

Sure, it doesn't directly tell you when TAI is going to come, because there's a separate thing you don't know, which is how compute-efficient our systems are going to be compared to the human brain. And also that translation factor is changing with time. But surely that's another quantity we can have a distribution over.

If there's some quantity that we don't know the value of, but we have at least one way to estimate it using some other uncertain quantities, why is it not useful to reduce our uncertainty about some of those other quantities?

This seems like exactly the kind of thing superforecasters are suppo...

Eliezer’s main point of his ~20k words isn’t really what I want to defend, but I will say a few words about how I would constructively interpret it. I think Eliezer’s main claim is that he has an intuitive system-1 model of the inhomogeneous Poisson process that emits working AGI systems, and that this model isn’t informed by the compute equivalents of biological anchors, and that his lack of being informed by that isn’t a mistake. I’m not sure if he’s actually making the stronger claim that anyone whose model is informed by the biological anchors is making a mistake, but if so, I don’t agree. My own model is somewhat informed by biological anchors; it’s more informed by what the TAI report calls “subjective impressiveness extrapolation”, extrapolations on benchmark performance, and some vague sense of other point processes that emit AI winters and major breakthroughs. Someone who has total Knightian uncertainty and no intuitive models of how AGI comes about would surely do well to adopt OpenPhil’s distribution as a prior.

I feel like a big crux is whether Platt's Law is true:

Eliezer: I mean, in fact, part of my actual sense of indignation at this whole affair, is the way that Platt's law of strong AI forecasts - which was in the 1980s generalizing "thirty years" as the time that ends up sounding "reasonable" to would-be forecasters - is still exactly in effect for what ends up sounding "reasonable" to would-be futurists, in fricking 2020 while the air is filling up with AI smoke in the silence of nonexistent fire alarms.

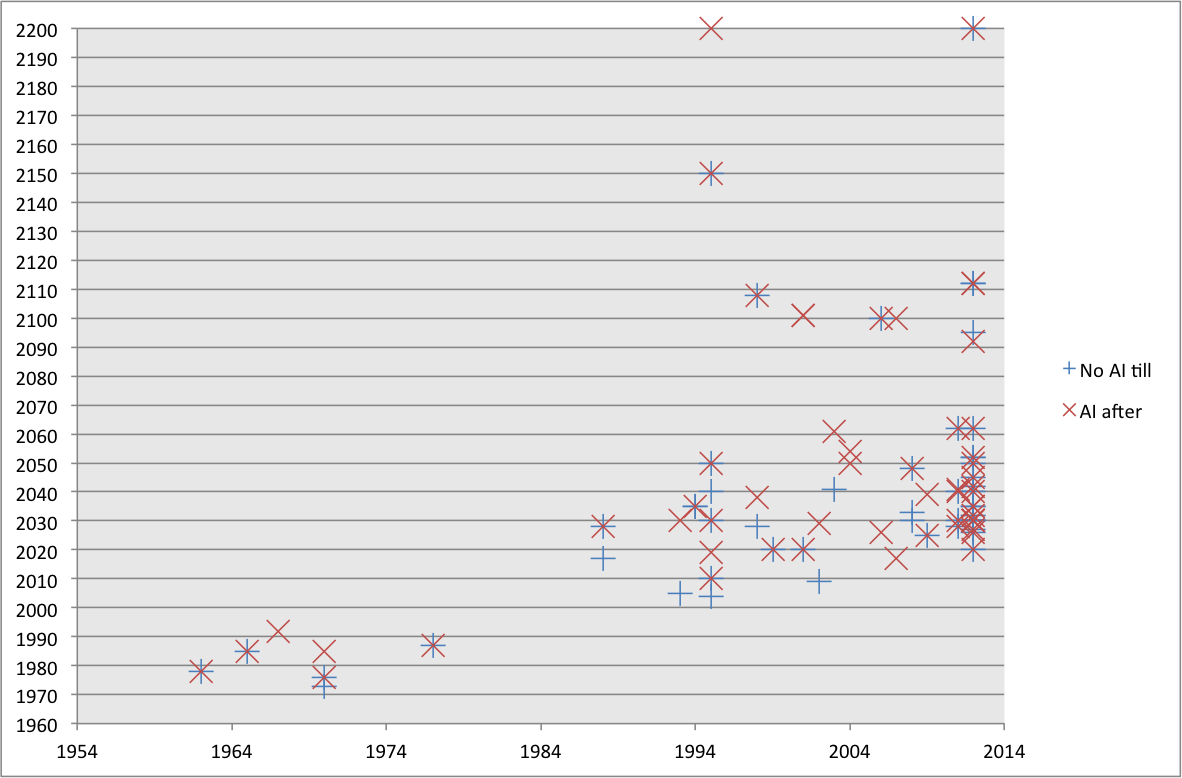

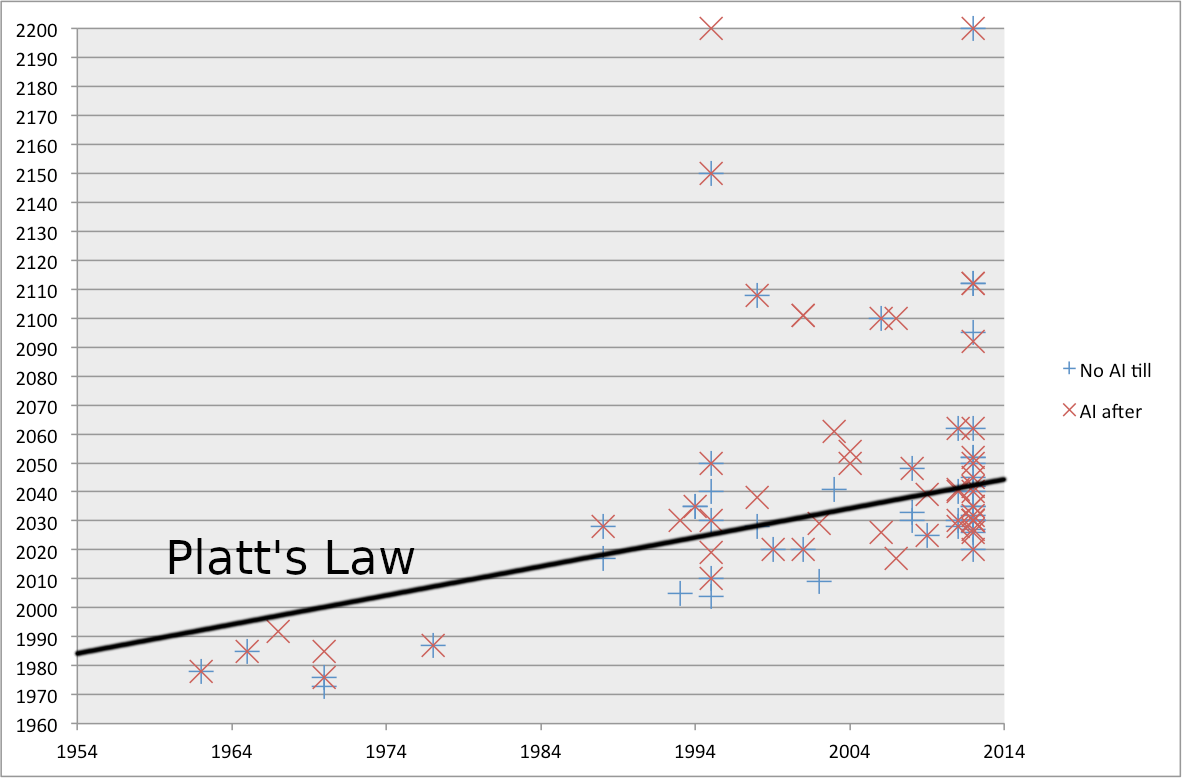

Didn't AI Impacts look into this a while back? See e.g. this dataset. Below is one of the graphs:

It may help to visualize this graph with the line for Platt's Law drawn in.

Overall I find the law to be pretty much empirically validated, at least by the standards I'd expect from a half in jest Law of Prediction.

(This post was partly written as a follow-up to Eliezer's conversations with Paul and Ajeya, so I've inserted it into the conversations sequence.)

It does fit well there, but I think it was more inspired by the person I met who thought I was being way too arrogant by not updating in the direction of OpenPhil's timeline estimates to the extent I was uncertain.

For reference, here is a 2004 post by Moravec, that’s helpfully short, containing his account of his own predictions: https://www.frc.ri.cmu.edu/~hpm/project.archive/robot.papers/2004/Predictions.html

I consider naming particular years to be a cognitively harmful sort of activity; I have refrained from trying to translate my brain's native intuitions about this into probabilities, for fear that my verbalized probabilities will be stupider than my intuitions if I try to put weight on them. What feelings I do have, I worry may be unwise to voice; AGI timelines, in my own experience, are not great for one's mental health, and I worry that other people seem to have weaker immune systems than even my own.

The following metaphor helped me to understand Eliezer's point:

Imagine you're forced to play the game of Russian roulette with the following rules:

How should you allocate your resources between the following two options?

It is clear that in this game, option A is almost useless.

(but not entirely useless, as your escape plans might depend on the timeline).

Which brings me to the second line of very obvious-seeming reasoning that converges upon the same conclusion - that it is in principle possible to build an AGI much more computationally efficient than a human brain - namely that biology is simply not that efficient, and especially when it comes to huge complicated things that it has started doing relatively recently.

Biological cells are computers which must copy bits to copy DNA. So we can ask biology - how much energy do cells use to copy each base pair? Seems they use just 4 ATP per base pair, or 1 ATP/bit, and thus within an OOM of the 'Landauer bound'. Which is more impressive if you consider that the typically quoted 'Landauer bound' of kT ln 2 is overly optimistic, as it only applies when the error probability is 50% or the computation takes infinite time. Useful computation has to finish in finite time and be more reliable than chance.
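For anyone who wants to check the order of magnitude, here is a small sketch comparing the energy of one ATP hydrolysis to kT ln 2 at body temperature. The ATP figure is an assumption: it uses the textbook standard free energy of about 30.5 kJ/mol, and the actual value inside a cell is usually quoted as higher. The `atp_per_bit=1` default simply takes the comment's own figure at face value, and the function names are invented for this example.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # SI definition
AVOGADRO_PER_MOL = 6.02214076e23  # SI definition


def landauer_bound_joules(temperature_kelvin):
    """Minimum energy to erase one bit in the idealized limit: k * T * ln 2."""
    return BOLTZMANN_J_PER_K * temperature_kelvin * math.log(2)


def atp_energy_joules(free_energy_kj_per_mol=30.5):
    """Energy per ATP hydrolysis.

    Assumption: ~30.5 kJ/mol is the textbook standard-condition value;
    in-cell conditions are usually quoted as somewhat higher.
    """
    return free_energy_kj_per_mol * 1e3 / AVOGADRO_PER_MOL


def ratio_to_landauer(atp_per_bit=1, temperature_kelvin=310.0):
    """Energy spent per copied bit, as a multiple of the Landauer bound."""
    return atp_per_bit * atp_energy_joules() / landauer_bound_joules(temperature_kelvin)


print(f"Landauer bound at 310 K: {landauer_bound_joules(310.0):.2e} J")
print(f"Energy per ATP:          {atp_energy_joules():.2e} J")
print(f"Ratio at 1 ATP/bit:      {ratio_to_landauer():.1f}x")
```

Under these assumptions the ratio lands in the low tens, so "within an OOM" holds only loosely, and the answer shifts with whichever ATP free energy and ATP-per-bit figures you plug in.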

...Brains have to pump thousands of ions in and out of each stretch of axon and dendrite, in order to restore their ability to fire another fast neural spike. The result is that the brain's computation is something like half a million times less ef

Imprecisely multiplying two analog numbers should not require 10^5 times the minimum bit energy in a well-designed computer.

A well-designed computer would also use, say, optical interconnects that worked by pushing one or two photons around at the speed of light. So if neurons are in some sense being relatively efficient at the given task of pumping thousands upon thousands of ions in and out of a depolarizing membrane in order to transmit signals at 100m/sec -- every ion of which necessarily uses at least the Landauer minimum energy -- they are being vastly far from optimally efficient.

The moment you see ions going in and out of a depolarizing membrane, and contrast that to the possibility of firing a photon down a fiber, you ought to be done asking whether or not biology has built an optimally efficient computer. It actually isn't any more complicated than that. You are driving yourself further from sanity if you then try to do very complicated reasoning about how it must be close to the limit of efficiency to pump thousands of ions in and out of a membrane instead.

Sorry, explain again why floods of neurotransmitter molecules bopping around are ideally thermodynamically efficient? You're assuming that they're trying to do multiplication out to 8-bit precision using analog quantities? Why suppose the 8-bit precision? Even if that part was actually important, why not perhaps ding biology a few engineering points for trying to represent it using analog quantities requiring 2^16 particles bopping around? Optimally doing something incredibly inefficient is incredibly inefficient.

In general, efficiency at the level of logic gates doesn't translate into the efficiency at the CPU level.

For example, imagine you're tasked to correctly identify the faces of your classmates from 1 billion photos of random human faces. If you fail to identify a face, you must re-do the job.

Your neurons are perfectly efficient. You have a highly optimized face-recognition circuitry.

Yet you'll consume more energy on the task than, say, Apple M1 CPU:

Even if the human brain is efficient on the level of neural circuits, it is unlikely to be the most efficient vessel for a general intelligence.

In general, high-level biological designs are a crappy mess, mostly made of kludgy bugfixes to previous dirty hacks, which were made to fix other kludgy bugfixes (an example).

And the newer the design, the crappier it is. For example, compare:

Human technology is rarely more efficient than biology along the quantitative dimensions that are important to biology, but human technology is not limited to building out of evolved wetware nanobots and can instead employ high energy manufacturing to create ultra durable materials that then enable very high energy density solutions. Our flying machines may not compete with birds in energy efficiency, but they harness power densities of a completely different scale to that available to biology. Basically the same applies to computers vs brains. AGI will outcompete human brains by brute scale, speed, and power rather than energy efficiency.

The human brain is just a scaled up primate brain, which is just a tweaked, more scalable mammal brain, but mammal brains have the same general architecture - which is closer to ~10^8 years old. It is hardly 'faulty and biased' - bias is in the mind.

A lot of the advantage of human technology is due to human technology figuring out how to use covalent bonds and metallic bonds, where biology sticks to ionic bonds and proteins held together by van der Waals forces (static cling, basically). This doesn't fit into your paradigm; it's just biology mucking around in a part of the design space easily accessible to mutation error, while humans work in a much more powerful design space because they can move around using abstract cognition.

You're missing the point!

Your arguments apply mostly toward arguing that brains are optimized for energy efficiency, but the important quantity in question is computational efficiency! You even admit that neurons are "optimizing hard for energy efficiency at the expense of speed", but don't seem to have noticed that this fact makes almost everything else you said completely irrelevant!

The point of my comment (from my perspective) was to focus very specifically on a few claims about biology/brains that I found questionable - relevant because the OP specifically was using energy as an efficiency metric.

It's relevant because energy efficiency is one of the standard key measures of low level hardware substrate computational efficiency.

At a higher level if you are talking about overall efficiency for some complex task, well then software/algorithm efficiency is obviously super important which is a more complex subject. And there are other low level metrics of importance as well such as feature size, speed, etc.

So what did you mean by computational efficiency?

FWIW I agree that bit also rang hollow to me--my sense was also that neurons are basically as energy-efficient as you can get--but by "computational efficiency" one means something like "amount of energy expended to achieve a computational result."

For example, imagine multiplying two four-digit numbers in your head vs. in a calculator. Each transistor operation in the calculator will be much more expensive than each neuron spike, however the calculator needs many fewer transistor operations than the brain needs neuron spikes, because the calculator is optimized to efficiently compute those sorts of multiplications whereas the brain needs to expensively emulate the calculator. Overall the calculator will spend fewer joules than the brain will.

I'm especially keen to hear responses to this point:

Eliezer: Backtesting this viewpoint on the previous history of computer science, it seems to me to assert that it should be possible to:

Train a pre-Transformer RNN/CNN-based model, not using any other techniques invented after 2017, to GPT-2 levels of performance, using only around 2x as much compute as GPT-2;

Play pro-level Go using 8-16 times as much computing power as AlphaGo, but only 2006 levels of technology.

...

Your model apparently suggests that we have gotten around 50 times more efficient at turning computation into intelligence since that time; so, we should be able to replicate any modern feat of deep learning performed in 2021, using techniques from before deep learning and around fifty times as much computing power.

OpenPhil: No, that's totally not what our viewpoint says when you backfit it to past reality. Our model does a great job of retrodicting past reality.

My guess is that Ajeya / OpenPhil would say "The halving-in-costs every 2.5 years is on average, not for everything. Of course there are going to be plenty of things for which algorithmic progress has been much faster. There are also things f...

Is Humbali right that generic uncertainty about maybe being wrong, without other extra premises, should increase the entropy of one's probability distribution over AGI, thereby moving out its median further away in time?

My answer to this is that

First, no update whatsoever should take place because a probability distribution already expresses uncertainty, and there's no mechanism by which the uncertainty increased. Adele Lopez independently (and earlier) came up with the same answer.

Second, if there were an update -- say EY learned "one of the steps used in my model was wrong" -- this should indeed change the distribution. However, it should change it toward the prior distribution. It's completely unclear what the prior distribution is, but there is no rule whatsoever that says "more entropy = more prior-y" as shown by the fact that a uniform distribution over the next years has extremely high entropy yet makes a ludicrously confident prediction.
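To make the uniform-distribution point concrete, here is a minimal sketch with made-up numbers. Both forecasts are hypothetical illustrations; the 10-year window, the spike at year 15, and the helper names are assumptions for the example, not anyone's actual forecast.

```python
import math

def entropy_bits(dist):
    """Shannon entropy, in bits, of a {year_offset: probability} forecast."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)

def prob_within(dist, years):
    """Probability that the event arrives fewer than `years` years from now."""
    return sum(p for offset, p in dist.items() if offset < years)

# Hypothetical forecast 1: uniform over the next 10 years.
uniform_decade = {offset: 0.1 for offset in range(10)}

# Hypothetical forecast 2: 70% on year 15, 1% on each other year from 0 to 30.
spiked = {offset: 0.01 for offset in range(31) if offset != 15}
spiked[15] = 0.7

for name, dist in [("uniform over next decade", uniform_decade), ("spiked", spiked)]:
    print(f"{name}: entropy = {entropy_bits(dist):.2f} bits, "
          f"P(within 10 years) = {prob_within(dist, 10):.2f}")
```

The uniform forecast has the higher entropy of the two (about 3.3 bits against about 2.4), yet it is the one that asserts with certainty that the event happens within ten years.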

See also Information Charts (second chapter). Being under-confident/losing confidence does not have to shift your probability toward the 50% mark; it shifts it toward the prior from whoever it was before, and the prior can be lite

Going to try answering this one:

Humbali: I feel surprised that I should have to explain this to somebody who supposedly knows probability theory. If you put higher probabilities on AGI arriving in the years before 2050, then, on average, you're concentrating more probability into each year that AGI might possibly arrive, than OpenPhil does. Your probability distribution has lower entropy. We can literally just calculate out that part, if you don't believe me. So to the extent that you're wrong, it should shift your probability distributions in the direction of maximum entropy.

[Is Humbali right that generic uncertainty about maybe being wrong, without other extra premises, should increase the entropy of one's probability distribution over AGI, thereby moving out its median further away in time?]

The uncertainty must already be "priced in" your probability distribution. So your distribution and hence your median shouldn't shift at all, unless you actually observe new relevant evidence of course.

In many ways, this post is frustrating to read. It isn't straightforward, it needlessly insults people, and it mixes irrelevant details with the key ideas.

And yet, as with many of Eliezer's posts, its key points are right.

What this post does is uncover the main epistemological mistakes made by almost everyone trying their hands at figuring out timelines. Among others, there is:

The "shut up"s and "please stop"s are jarring.

Definitely not, for example, norms to espouse in argumentation (and tbf nowhere does this post claim to be a model for argument, except maybe implicitly under some circumstances).

Yet there's something to it.

There's a game of Chicken arising out of the shared responsibility to generate (counter)arguments. If Eliezer commits to Straight, ie. refuses to instantiate the core argument over and over again (either explicitly, by saying "you need to come up with the generator" or implicitly, by refusing to engage with ...

Only semi related, but I really dislike the pretend argument rhetorical format. Public arguments are mostly competitive whether you like it or not. Whatever pedagogical benefits there are to explaining ideas as fake dialogue, your "simulations" of the actual words of real people you disagree with are immensely distracting. Throughout this post my attention is constantly interrupted by how socially awkward it is to write and publish an imaginary script in which you dunk on your intellectual opponents.

Biological anchors that focus on compute make sense insofar as the arrival of AGI is mostly a function of available compute. Which is the case if the algorithm is relatively simple and compute is the main ingredient of intelligence.

Was it sensible to make that assumption in 1988? Maybe not.

Is it sensible to make that assumption today? Well, during the last ten years a class of simple algorithms has made huge strides in all kinds of tasks that were "human-only" just a few years back.

Furthermore, in many cases the performance of these algo...

Curated. Many times over the years I've seen analogies from biology used to produce estimates about AI timelines. This is the most thoroughly-argued case I've seen against them. While I believe some find the format uncomfortable, I'm personally glad to see Eliezer expressing his beliefs as he feels them, and think this is worth reading for anyone interested in predicting how AI will play out in coming years.

For those short on time, I recommend this summary by Grant Demaree.

I'm very new to Less Wrong in general, and to Eliezer's writing in particular, so I have a newbie question.

any more than you've ever argued that "we have to take AGI risk seriously even if there's only a tiny chance of it" or similar crazy things that other people hallucinate you arguing.

just like how people who helpfully try to defend MIRI by saying "Well, but even if there's a tiny chance..." are not thereby making their epistemic sins into mine.

I've read AGI Ruin: A List of Lethalities, and I legitimately have no idea what is wrong with "we have to take...

List of Lethalities isn't telling you "There's a small chance of this." It's saying, "This will kill us. We're all walking dead. I'm sorry."

For indeed in a case like this, one first backs up and asks oneself "Is Humbali right or not?" and not "How can I prove Humbali wrong?"

Gonna write up some of my thoughts here without reading on, and post them (also without reading on).

I don’t get why Humbali’s objection has not already been ‘priced in’. Eliezer has a bunch of models and info and his gut puts the timeline at before 2050. I don’t think “what if you’re mistaken about everything” is an argument Eliezer hasn’t already considered, so I think it’s already priced into the prediction. You’re not allowe

By the standards of “we will have a general intelligence”, Moravec is wrong, but by the standards of “computers will be able to do anything humans can do”, Moravec’s timeline seems somewhat uncontroversially prescient? For essentially any task that we can define a measurable success metric, we more or less* know how to fashion a function approximator that’s as good as or better than a human.

*I’ll freely admit that this is moving the goalposts, but there’s a slow, boring path to “AGI” where we completely automate the pipeline for “generate a function appro...

Relatedly, do you consider [function approximators for basically everything becoming better with time] to also fail to be a good predictor of AGI timelines for the same reasons that compute-based estimates fail?

Obviously yes, unless you can take the metrics on which your graphs show steady progress and really actually locate AGI on them instead of just tossing out a shot-in-the-dark biological analogy to locate AGI on them.

I have calculated the number of computer operations used by evolution to evolve the human brain - searching through organisms with increasing brain size - by adding up all the computations that were done by any brains before modern humans appeared. It comes out to 10^43 computer operations. AGI isn't coming any time soon!

And yet, because your reasoning contains the word "biological", it is just as invalid and unhelpful as Moravec's original prediction.

I agree that the conclusion about AGI not coming soon is invalid, so the following isn't exactly r...

Like, we could extrapolate out 3 OOMs of compute/$ per decade to get an upper bound: very probably AGI before 2150-ish, if Moore's law continues.

Projecting Moore's Law to continue for 130 years more is almost surely incorrect. An upper bound that is conditional on that happening seems devoid of any actual predictive power. If we approach that level of computational power prior to AGI, it will almost surely be through some other mechanism than Moore's Law, and so would be arbitrarily detached from that timeline.

(I'm taking the tack that "you might be wrong" isn't just already accounted for in your distributions, and you're now considering a generic update on "you might be wrong".)

so you're more confident about your AGI beliefs, and OpenPhil is less confident. Therefore, to the extent that you might be wrong, the world is going to look more like OpenPhil's forecasts of how the future will probably look

Informally, this is simply wrong: the specificity in OpenPhil's forecasts is some other specificity added to some hypothetical max-entropy distribution, and it ...

OK, I'll bite on EY's exercise for the reader, on refuting this "what-if":

...Humbali: Then here's one way that the minimum computational requirements for general intelligence could be higher than Moravec's argument for the human brain. Since, after all, we only have one existence proof that general intelligence is possible at all, namely the human brain. Perhaps there's no way to get general intelligence in a computer except by simulating the brain neurotransmitter-by-neurotransmitter. In that case you'd need a lot more computing oper

Moore's Law is a phenomenon produced by human cognition and the fact that human civilization runs off human cognition. You can't expect the surface phenomenon to continue unchanged after the deep causal phenomenon underlying it starts changing. What kind of bizarre worship of graphs would lead somebody to think that the graphs were the primary phenomenon and would continue steady and unchanged when the forces underlying them changed massively?

I used to be compelled by this argument, but I've come to have more respect for the god of straight lines on graphs, even though I don't yet understand how it could possibly work like that.

My counterargument to Humbali would go like this: "Suppose I tell you I've already taken 'you might be wrong' into account. If you ask me to do it again, then you can just do the same thing to my more uncertain estimate - I'd end up in an infinite regress, and the argument would become a statement that no matter how uncertain you are, you should be more uncertain than that. And that is ridiculous. So I'm going to take 'you might be wrong' into account only once. Which I already have. So shut up."

Eliezer has short timelines, yet thinks that the current ML paradigm isn’t likely to be the final paradigm. Does this mean that he has some idea of a potential next paradigm? (Which he is, for obvious reasons, not talking about, but presumably expects other researchers to uncover soon, if they don’t already have an idea). Or is it that somehow the recent surprising progress within the ML paradigm (AlphaGo, AlphaFold, GPT3 etc) makes it more likely that a new paradigm that is even more algorithmically efficient is likely to emerge soon? (If the latter, I don’t see the connection).

Is Humbali right that generic uncertainty about maybe being wrong, without other extra premises, should increase the entropy of one's probability distribution over AGI, thereby moving out its median further away in time?

Writing my response in advance of reading the answer, for fun.

One thought is that this argument fails to give accurate updates to other people. Almost 100% of people would give AGI medians much further away than what I think is reasonable, and if this method wants to be a generally useful method for getting better guesses by recognizing you

My answer to the exercise, thought through before reading the remainder of the post but written down after seeing others do the same:

There is more than one direction of higher entropy to take, not necessarily towards OpenPhil's distribution. Also, entropy is relative to a measure and the default formula privileges the Lebesgue measure. Instead of calculating entropy from the probabilities for buckets 2022, 2023, 2024, ..., why not calculate it for buckets 3000-infinity, 2100-3000, 2025-2099, 2023-2024, ...?
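A rough sketch of the bucketing point, using two made-up forecasts and a shortened version of the bucket list above (the 3000-infinity bucket is left out because neither toy forecast puts mass there). The forecasts, bucket edges, and function names are all assumptions for illustration.

```python
import math

def entropy_bits(probs):
    """Shannon entropy, in bits, of a list of bucket probabilities."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def rebucket(yearly, edges):
    """Sum yearly probabilities into half-open buckets [edges[i], edges[i+1])."""
    totals = [0.0] * (len(edges) - 1)
    for year, p in yearly.items():
        for i in range(len(edges) - 1):
            if edges[i] <= year < edges[i + 1]:
                totals[i] += p
                break
    return totals

# Two hypothetical forecasts over calendar years.
early = {year: 1 / 10 for year in range(2022, 2032)}   # uniform over 2022-2031
late = {year: 1 / 100 for year in range(2050, 2150)}   # uniform over 2050-2149

# Buckets: 2022, 2023-2024, 2025-2099, 2100-2999.
coarse_edges = [2022, 2023, 2025, 2100, 3000]

yearly_early = entropy_bits(early.values())
yearly_late = entropy_bits(late.values())
coarse_early = entropy_bits(rebucket(early, coarse_edges))
coarse_late = entropy_bits(rebucket(late, coarse_edges))

print(f"yearly buckets: early = {yearly_early:.2f} bits, late = {yearly_late:.2f} bits")
print(f"coarse buckets: early = {coarse_early:.2f} bits, late = {coarse_late:.2f} bits")
```

With yearly buckets the late forecast has more entropy; with the coarse buckets the ordering flips, so "which forecast is higher-entropy" depends on the choice of buckets.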

It's very easy to construct probability distributions that have earlier timelines, that look more intuitively unconfident, and that have higher entropy than the bio-anchors forecast. You can just take some of the probability mass from the peak around 2050 and redistribute it among earlier years, especially years that are very close to the present, where bioanchors are reasonably confident that AGI is unlikely.
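As a minimal sketch of that construction: the bell-shaped curve below is only a stand-in with a peak at 2050, not the actual bio-anchors forecast, and the 20% transfer and 2022-2031 target window are arbitrary choices for illustration.

```python
import math

def entropy_bits(probs):
    """Shannon entropy, in bits, of a list of probabilities."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def median_year(dist):
    """First year at which cumulative probability reaches one half."""
    cumulative = 0.0
    for year in sorted(dist):
        cumulative += dist[year]
        if cumulative >= 0.5:
            return year
    return max(dist)

# Stand-in forecast: discretized bell curve centered on 2050 (an assumption).
raw = {y: math.exp(-0.5 * ((y - 2050) / 10) ** 2) for y in range(2022, 2101)}
total = sum(raw.values())
peaked = {y: w / total for y, w in raw.items()}

# Take 20% of the mass from the peak years and spread it over 2022-2031.
shifted = dict(peaked)
moved = 0.0
for y in range(2045, 2056):
    taken = 0.2 * peaked[y]
    shifted[y] -= taken
    moved += taken
for y in range(2022, 2032):
    shifted[y] += moved / 10

print("median:", median_year(peaked), "->", median_year(shifted))
print(f"entropy: {entropy_bits(peaked.values()):.3f} -> "
      f"{entropy_bits(shifted.values()):.3f} bits")
```

In this toy case the modified forecast has both an earlier median and a higher entropy than the one it started from.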

Spoiler tags are borked the way I'm using them.

anyway, another place to try your hand at calibration:

Humbali: No. You're expressing absolute certainty in your underlying epistemology and your entire probability distribution

no he isn't, why?

Humbali is asking for Eliezer to double count evidence. Consilience is hard if you don't do your homework on provenance of heuristic and not just naively counting up outputs who themselves also didn't do their homework.

Or in other words: "Do not cite the deep evidence to me, I was there when it was written"

And another ...

Is Humbali right that generic uncertainty about maybe being wrong, without other extra premises, should increase the entropy of one's probability distribution over AGI, thereby moving out its median further away in time?

I'll give the homework a shot.

Entropy is the amount of uncertainty inherent in your probability distribution, so generic uncertainty implies an increase in the entropy of one's probability distribution (whatever the eventual result is, it provides you more information than it would have if you were more certain beforehand). However, I

It’s worth noting that gradient descent towards maximum entropy (with respect to the Wasserstein metric and Lebesgue measure, respectively) is equivalent to the heat equation, which justifies your picture of probability mass diffusing outward. It’s also exactly right that if you put a barrier at the left end of the possibility space (i.e. ruling out the date of AGI’s arrival being earlier than the present moment), then this natural direction of increasing entropy will eventually settle into all the probability masses spreading to the right forever, so the median will also move to the right forever.

This isn’t the only way of increasing entropy, though—just a very natural one. Even if I have to keep the median fixed at 2050, by keeping fixed all the 0.5 probability mass to the left of 2050, I can still increase entropy forever by spreading out only the probability masses to the right of 2050 further towards infinity.
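A small sketch of the fixed-median case, with hypothetical numbers: half the mass sits on 2034-2049 and never moves, while the other half is spread evenly over a right-hand tail of growing width. The year ranges and function names are assumptions chosen so the arithmetic is exact.

```python
import math

def entropy_bits(probs):
    """Shannon entropy, in bits, of a list of probabilities."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def forecast_with_tail(tail_years):
    """Half the mass on 2034-2049 (fixed); half spread evenly over
    `tail_years` years starting at 2050."""
    dist = {year: 0.5 / 16 for year in range(2034, 2050)}
    for year in range(2050, 2050 + tail_years):
        dist[year] = 0.5 / tail_years
    return dist

def mass_before(dist, year):
    """Total probability on years strictly earlier than `year`."""
    return sum(p for y, p in dist.items() if y < year)

for tail_years in (16, 64, 256, 1024):
    dist = forecast_with_tail(tail_years)
    print(f"tail of {tail_years:>4} years: "
          f"mass before 2050 = {mass_before(dist, 2050)}, "
          f"entropy = {entropy_bits(dist.values())} bits")
```

The mass before 2050 stays at one half throughout, so the median does not move, while the entropy gains one bit each time the tail width quadruples.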

but even if I've made two errors in the same direction, that only shifts the estimate by 7 years or so.

Where does he say this? On page 60, I can see Moravec say:

Nevertheless, my estimates can be useful even if they are only remotely correct. Later we will see that a thousandfold error in the ratio of neurons to computations shifts the predicted arrival time of fully intelligent machines a mere 20 years.

Which seems much more reasonable than claiming 7-year precision.
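The two figures can be compared by backing out the doubling time each one implies. This is a rough sketch under two assumptions that are not from the book: compute grows at a steady exponential rate, so an error factor of k in required compute shifts the date by log2(k) doubling times; and "two errors in the same direction" means two order-of-magnitude errors, i.e. a factor of 100. The function names are made up for the example.

```python
import math

def implied_doubling_time(error_factor, shift_years):
    """Doubling time (years) under which `error_factor` costs `shift_years`."""
    return shift_years / math.log2(error_factor)

def shift_for_error(error_factor, doubling_time_years):
    """Years of delay caused by needing `error_factor` times more compute."""
    return math.log2(error_factor) * doubling_time_years

# Page 60 quote: a thousandfold error shifts the date by 20 years.
book_doubling = implied_doubling_time(1000, 20)

# Dialogue version: a hundredfold error (assumed reading) shifts it by 7 years.
dialogue_doubling = implied_doubling_time(100, 7)

print(f"book quote implies doubling every {book_doubling:.2f} years")
print(f"dialogue figure implies doubling every {dialogue_doubling:.2f} years")
print(f"100x error at the book's rate: {shift_for_error(100, book_doubling):.1f} years")
```

Under these assumptions the book's figure implies compute doubling roughly every 2 years and the dialogue's figure roughly every 1 year; at the 2-year rate, a 100x error would shift the date by about 13 years rather than 7.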

I have an objection to the point about how AI models will be more efficient because they don't need to do massive parallelization:

Massive parallelization is useful for AI models too and for somewhat similar reasons. Parallel computation allows the model to spit out a result more quickly. In the biological setting, this is great because it means you can move out of the way when a tiger jumps toward you. In the ML setting, this is great because it allows the gradient to be computed more quickly. The disadvantage of parallelization is that it means that more ...

I'm not sure if this is what Eliezer was taking a swing at, but this clicked while reading and I think it's a similar underlying logic error. Apologies to those not already familiar with the argument I'm referencing:

There’s a stats argument that’s been discussed here before, the short version of which is roughly: "Half of all humans that will ever be born have probably already been born, we think that's about N people. At current birth rates we will make that many people again in X years and humanity will thus on average go extinct Soon™" (insert pr...

I don't understand the part that begins with "The last paradigm shifts were from..."

If the last paradigm shifts, including Stack More Layers, were all towards "less knowledge, more compute", how can you conclude that "the world-ending AGI seems more likely to incorporate more knowledge and less brute force" in the very next paragraph?

I don't necessarily disagree with the conclusion, but it doesn't seem to follow from the preceding reasoning.

Editing error:

wasn't there something at the beginning about how, when you're unsure, you should be careful about criticizing people who are more unsure than you?

"more unsure than you" => "more sure than you"

(Assuming I have correctly understood that this is a reference to the warning that your uncertainty is in your map, not the territory, and that sometimes other people are more sure because they actually know more than you.)

In real life, Reality goes off and does something else instead, and the Future does not look in that much detail like the futurists predicted

Half-joking - unless the futurist in question is H. G. Wells. I think there was a quote that showed that he effectively predicted the pixelization of early images, along with many similar small details of the early 21st century (although, of course, survivorship bias for details probably influences my memory and the retelling I rely on).

- 1988 -

Hans Moravec: Behold my book Mind Children. Within, I project that, in 2010 or thereabouts, we shall achieve strong AI. I am not calling it "Artificial General Intelligence" because this term will not be coined for another 15 years or so.

Eliezer (who is not actually on the record as saying this, because the real Eliezer is, in this scenario, 8 years old; this version of Eliezer has all the meta-heuristics of Eliezer from 2021, but none of that Eliezer's anachronistic knowledge): Really? That sounds like a very difficult prediction to make correctly, since it is about the future, which is famously hard to predict.

Imaginary Moravec: Sounds like a fully general counterargument to me.

Eliezer: Well, it is, indeed, a fully general counterargument against futurism. Successfully predicting the unimaginably far future - that is, more than 2 or 3 years out, or sometimes less - is something that human beings seem to be quite bad at, by and large.

Moravec: I predict that, 4 years from this day, in 1992, the Sun will rise in the east.

Eliezer: Okay, let me qualify that. Humans seem to be quite bad at predicting the future whenever we need to predict anything at all new and unfamiliar, rather than the Sun continuing to rise every morning until it finally gets eaten. I'm not saying it's impossible to ever validly predict something novel! Why, even if that was impossible, how could I know it for sure? By extrapolating from my own personal inability to make predictions like that? Maybe I'm just bad at it myself. But any time somebody claims that some particular novel aspect of the far future is predictable, they justly have a significant burden of prior skepticism to overcome.

More broadly, we should not expect a good futurist to give us a generally good picture of the future. We should expect a great futurist to single out a few rare narrow aspects of the future which are, somehow, exceptions to the usual rule about the future not being very predictable.

I do agree with you, for example, that we shall at some point see Artificial General Intelligence. This seems like a rare predictable fact about the future, even though it is about a novel thing which has not happened before: we keep trying to crack this problem, we make progress albeit slowly, the problem must be solvable in principle because human brains solve it, eventually it will be solved; this is not a logical necessity, but it sure seems like the way to bet. "AGI eventually" is predictable in a way that it is not predictable that, e.g., the nation of Japan, presently upon the rise, will achieve economic dominance over the next decades - to name something else that present-day storytellers of 1988 are talking about.

But timing the novel development correctly? That is almost never done, not until things are 2 years out, and often not even then. Nuclear weapons were called, but not nuclear weapons in 1945; heavier-than-air flight was called, but not flight in 1903. In both cases, people said two years earlier that it wouldn't be done for 50 years - or said, decades too early, that it'd be done shortly. There's a difference between worrying that we may eventually get a serious global pandemic, worrying that eventually a lab accident may lead to a global pandemic, and forecasting that a global pandemic will start in November of 2019.

Moravec: You should read my book, my friend, into which I have put much effort. In particular - though it may sound impossible to forecast, to the likes of yourself - I have carefully examined a graph of computing power in single chips and the most powerful supercomputers over time. This graph looks surprisingly regular! Now, of course not all trends can continue forever; but I have considered the arguments that Moore's Law will break down, and found them unconvincing. My book spends several chapters discussing the particular reasons and technologies by which we might expect this graph to not break down, and continue, such that humanity will have, by 2010 or so, supercomputers which can perform 10 trillion operations per second.*

Oh, and also my book spends a chapter discussing the retina, the part of the brain whose computations we understand in the most detail, in order to estimate how much computing power the human brain is using, arriving at a figure of 10^13 ops/sec. This neuroscience and computer science may be a bit hard for the layperson to follow, but I assure you that I am in fact an experienced hands-on practitioner in robotics and computer vision.

So, as you can see, we should first get strong AI somewhere around 2010. I may be off by an order of magnitude in one figure or another; but even if I've made two errors in the same direction, that only shifts the estimate by 7 years or so.

(*) Moravec just about nailed this part; the actual year was 2008.

Eliezer: I sure would be amused if we did in fact get strong AI somewhere around 2010, which, for all I know at this point in this hypothetical conversation, could totally happen! Reversed stupidity is not intelligence, after all, and just because that is a completely broken justification for predicting 2010 doesn't mean that it cannot happen that way.

Moravec: Really now. Would you care to enlighten me as to how I reasoned so wrongly?

Eliezer: Among the reasons why the Future is so hard to predict, in general, is that the sort of answers we want tend to be the products of lines of causality with multiple steps and multiple inputs. Even when we can guess a single fact that plays some role in producing the Future - which is not of itself all that rare - usually the answer the storyteller wants depends on more facts than that single fact. Our ignorance of any one of those other facts can be enough to torpedo our whole line of reasoning - in practice, not just as a matter of possibilities. You could say that the art of exceptions to Futurism being impossible, consists in finding those rare things that you can predict despite being almost entirely ignorant of most concrete inputs into the concrete scenario. Like predicting that AGI will happen at some point, despite not knowing the design for it, or who will make it, or how.

My own contribution to the Moore's Law literature consists of Moore's Law of Mad Science: "Every 18 months, the minimum IQ required to destroy the Earth drops by 1 point." Even if this serious-joke was an absolutely true law, and aliens told us it was absolutely true, we'd still have no ability whatsoever to predict thereby when the Earth would be destroyed, because we'd have no idea what that minimum IQ was right now or at any future time. We would know that in general the Earth had a serious problem that needed to be addressed, because we'd know in general that destroying the Earth kept on getting easier every year; but we would not be able to time when that would become an imminent emergency, until we'd seen enough specifics that the crisis was already upon us.

In the case of your prediction about strong AI in 2010, I might put it as follows: The timing of AGI could be seen as a product of three factors, one of which you can try to extrapolate from existing graphs, and two of which you don't know at all. Ignorance of any one of them is enough to invalidate the whole prediction.

These three factors are:

Or to rephrase: Depending on how much you and your civilization know about AI-making - how much you know about cognition and computer science - it will take you a variable amount of computing power to build an AI. If you really knew what you were doing, for example, I confidently predict that you could build a mind at least as powerful as a human mind, while using fewer floating-point operations per second than a human brain is making useful use of -

Chris Humbali: Wait, did you just say "confidently"? How could you possibly know that with confidence? How can you criticize Moravec for being too confident, and then, in the next second, turn around and be confident of something yourself? Doesn't that make you a massive hypocrite?

Eliezer: Um, who are you again?

Humbali: I'm the cousin of Pat Modesto from your previous dialogue on Hero Licensing! Pat isn't here in person because "Modesto" looks unfortunately like "Moravec" on a computer screen. And also their first name looks a bit like "Paul" who is not meant to be referenced either. So today I shall be your true standard-bearer for good calibration, intellectual humility, the outside view, and reference class forecasting -

Eliezer: Two of these things are not like the other two, in my opinion; and Humbali and Modesto do not understand how to operate any of the four correctly, in my opinion; but anybody who's read "Hero Licensing" should already know I believe that.

Humbali: - and I don't see how Eliezer can possibly be so confident, after all his humble talk of the difficulty of futurism, that it's possible to build a mind 'as powerful as' a human mind using 'less computing power' than a human brain.

Eliezer: It's overdetermined by multiple lines of inference. We might first note, for example, that the human brain runs very slowly in a serial sense and tries to make up for that with massive parallelism. It's an obvious truth of computer science that while you can use 1000 serial operations per second to emulate 1000 parallel operations per second, the reverse is not in general true.

To put it another way: if you had to build a spreadsheet or a word processor on a computer running at 100Hz, you might also need a billion processing cores and massive parallelism in order to do enough cache lookups to get anything done; that wouldn't mean the computational labor you were performing was intrinsically that expensive. Since modern chips are massively serially faster than the neurons in a brain, and the direction of conversion is asymmetrical, we should expect that there are tasks which are immensely expensive to perform in a massively parallel neural setup, which are much cheaper to do with serial processing steps, and the reverse is not symmetrically true.

A sufficiently adept builder can build general intelligence more cheaply in total operations per second, if they're allowed to line up a billion operations one after another per second, versus lining up only 100 operations one after another. I don't bother to qualify this with "very probably" or "almost certainly"; it is the sort of proposition that a clear thinker should simply accept as obvious and move on.
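The asymmetry can be sketched with an idealized timing model. This is a toy lower bound, not a claim about real chips or real brains: a task needs at least (total operations / total throughput) seconds, and also at least (longest chain of dependent steps / steps per second) seconds, however many units are available. The machine and task numbers echo the figures in the text; the names are hypothetical.

```python
def run_time_seconds(total_ops, chain_length, units, steps_per_second):
    """Idealized lower bound on run time: the larger of the work bound
    (all units busy) and the depth bound (dependent steps cannot overlap)."""
    work_bound = total_ops / (units * steps_per_second)
    depth_bound = chain_length / steps_per_second
    return max(work_bound, depth_bound)

# Hypothetical machines.
SERIAL_CHIP = dict(units=1, steps_per_second=10**9)       # one fast core
PARALLEL_SLOW = dict(units=10**9, steps_per_second=100)   # a billion 100 Hz units

# Hypothetical tasks.
WIDE_TASK = dict(total_ops=10**11, chain_length=1)        # fully independent ops
DEEP_TASK = dict(total_ops=10**9, chain_length=10**9)     # each op needs the last

for task_name, task in [("wide", WIDE_TASK), ("deep", DEEP_TASK)]:
    for machine_name, machine in [("serial chip", SERIAL_CHIP),
                                  ("parallel slow", PARALLEL_SLOW)]:
        seconds = run_time_seconds(**task, **machine)
        print(f"{task_name} task on {machine_name}: {seconds:g} s")
```

In this toy model the serial chip is 100 times slower on the wide task, which is just the raw throughput gap, while the slow parallel machine is ten million times slower on the deep task, because extra units cannot shorten a chain of dependent steps.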

Humbali: And is it certain that neurons can perform only 100 serial steps one after another, then? As you say, ignorance about one fact can obviate knowledge of any number of others.

Eliezer: A typical neuron firing as fast as possible can do maybe 200 spikes per second, a few rare neuron types used by e.g. bats to echolocate can do 1000 spikes per second, and the vast majority of neurons are not firing that fast at any given time. The usual and proverbial rule in neuroscience - the sort of academically respectable belief I'd expect you to respect even more than I do - is called "the 100-step rule", that any task a human brain (or mammalian brain) can do on perceptual timescales, must be doable with no more than 100 serial steps of computation - no more than 100 things that get computed one after another. Or even fewer if the computation is running off spiking frequencies instead of individual spikes.

Moravec: Yes, considerations like that are part of why I'd defend my estimate of 10^13 ops/sec for a human brain as being reasonable - more reasonable than somebody might think if they were, say, counting all the synapses and multiplying by the maximum number of spikes per second in any neuron. If you actually look at what the retina is doing, and how it's computing that, it doesn't look like it's doing one floating-point operation per activation spike per synapse.

Eliezer: There's a similar asymmetry between precise computational operations having a vastly easier time emulating noisy or imprecise computational operations, compared to the reverse - there is no doubt a way to use neurons to compute, say, exact 16-bit integer addition, which is at least more efficient than a human trying to add up 16986+11398 in their heads, but you'd still need more synapses to do that than transistors, because the synapses are noisier and the transistors can just do it precisely. This is harder to visualize and get a grasp on than the parallel-serial difference, but that doesn't make it unimportant.

Which brings me to the second line of very obvious-seeming reasoning that converges upon the same conclusion - that it is in principle possible to build an AGI much more computationally efficient than a human brain - namely that biology is simply not that efficient, and especially when it comes to huge complicated things that it has started doing relatively recently.

ATP synthase may be close to 100% thermodynamically efficient, but ATP synthase is literally over 1.5 billion years old and a core bottleneck on all biological metabolism. Brains have to pump thousands of ions in and out of each stretch of axon and dendrite, in order to restore their ability to fire another fast neural spike. The result is that the brain's computation is something like half a million times less efficient than the thermodynamic limit for its temperature - so around two millionths as efficient as ATP synthase. And neurons are a hell of a lot older than the biological software for general intelligence!
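
The "two millionths" figure follows directly from the "half a million times" figure, as this small sketch shows. It treats ATP synthase as exactly 100% efficient, which is a simplification of the "may be close to 100%" claim above.

```python
from fractions import Fraction

# From the text: brain computation is about half a million times less
# efficient than the thermodynamic limit at its temperature.
BRAIN_SHORTFALL_FACTOR = 500_000

# SIMPLIFICATION: treat ATP synthase as exactly at the thermodynamic limit.
atp_synthase_efficiency = Fraction(1, 1)
brain_efficiency = Fraction(1, BRAIN_SHORTFALL_FACTOR)

# Brain efficiency relative to ATP synthase, expressed in millionths.
relative_efficiency = brain_efficiency / atp_synthase_efficiency
millionths = relative_efficiency * 1_000_000

print(f"brain vs ATP synthase: {float(relative_efficiency):.1e}")
print(f"that is {millionths} millionths")
```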

The software for a human brain is not going to be 100% efficient compared to the theoretical maximum, nor 10% efficient, nor 1% efficient, even before taking into account the whole thing with parallelism vs. serialism, precision vs. imprecision, or similarly clear low-level differences.

Humbali: Ah! But allow me to offer a consideration here that, I would wager, you've never thought of before yourself - namely - what if you're wrong? Ah, not so confident now, are you?

Eliezer: One observes, over one's cognitive life as a human, which sorts of what-ifs are useful to contemplate, and where it is wiser to spend one's limited resources planning against the alternative that one might be wrong; and I have oft observed that lots of people don't... quite seem to understand how to use 'what if' all that well? They'll be like, "Well, what if UFOs are aliens, and the aliens are partially hiding from us but not perfectly hiding from us, because they'll seem higher-status if they make themselves observable but never directly interact with us?"

I can refute individual what-ifs like that with specific counterarguments, but I'm not sure how to convey the central generator behind how I know that I ought to refute them. I am not sure how I can get people to reject these ideas for themselves, instead of them passively waiting for me to come around with a specific counterargument. My having to counterargue things specifically now seems like a road that never ends, and I am not as young as I once was, nor am I encouraged by how much progress I seem to be making. I refute one wacky idea with a specific counterargument, and somebody else comes along and presents a new wacky idea on almost exactly the same theme.

I know it's probably not going to work, if I try to say things like this, but I'll try to say them anyways. When you are going around saying 'what-if', there is a very great difference between your map of reality, and the territory of reality, which is extremely narrow and stable. Drop your phone, gravity pulls the phone downward, it falls. What if there are aliens and they make the phone rise into the air instead, maybe because they'll be especially amused at violating the rule after you just tried to use it as an example of where you could be confident? Imagine the aliens watching you, imagine their amusement, contemplate how fragile human thinking is and how little you can ever be assured of anything and ought not to be too confident. Then drop the phone and watch it fall. You've now learned something about how reality itself isn't made of what-ifs and reminding oneself to be humble; reality runs on rails stronger than your mind does.

Contemplating this doesn't mean you know the rails, of course, which is why it's so much harder to predict the Future than the past. But if you see that your thoughts are still wildly flailing around what-ifs, it means that they've failed to gel, in some sense, they are not yet bound to reality, because reality has no binding receptors for what-iffery.

The correct thing to do is not to act on your what-ifs that you can't figure out how to refute, but to go on looking for a model which makes narrower predictions than that. If that search fails, forge a model which puts some more numerical distribution on your highly entropic uncertainty, instead of diverting into specific what-ifs. And in the latter case, understand that this probability distribution reflects your ignorance and subjective state of mind, rather than your knowledge of an objective frequency; so that somebody else is allowed to be less ignorant without you shouting "Too confident!" at them. Reality runs on rails as strong as math; sometimes other people will achieve, before you do, the feat of having their own thoughts run through more concentrated rivers of probability, in some domain.

Now, when we are trying to concentrate our thoughts into deeper, narrower rivers that run closer to reality's rails, there is of course the legendary hazard of concentrating our thoughts into the wrong narrow channels that exclude reality. And the great legendary sign of this condition, of course, is the counterexample from Reality that falsifies our model! But you should not in general criticize somebody for trying to concentrate their probability into narrower rivers than yours, for this is the appearance of the great general project of trying to get to grips with Reality, that runs on true rails that are narrower still.

If you have concentrated your probability into different narrow channels than somebody else's, then, of course, you have a more interesting dispute; and you should engage in that legendary activity of trying to find some accessible experimental test on which your nonoverlapping models make different predictions.

Humbali: I do not understand the import of all this vaguely mystical talk.

Eliezer: I'm trying to explain why, when I say that I'm very confident it's possible to build a human-equivalent mind using less computing power than biology has managed to use effectively, and you say, "How can you be so confident, what if you are wrong," it is not unreasonable for me to reply, "Well, kid, this doesn't seem like one of those places where it's particularly important to worry about far-flung ways I could be wrong." Anyone who aspires to learn, learns over a lifetime which sorts of guesses are more likely to go oh-no-wrong in real life, and which sorts of guesses are likely to just work. Less-learned minds will have minds full of what-ifs they can't refute in more places than more-learned minds; and even if you cannot see how to refute all your what-ifs yourself, it is possible that a more-learned mind knows why they are improbable. For one must distinguish possibility from probability.

It is imaginable or conceivable that human brains have such refined algorithms that they are operating at the absolute limits of computational efficiency, or within 10% of it. But if you've spent enough time noticing where Reality usually exercises its sovereign right to yell "Gotcha!" at you, learning which of your assumptions are the kind to blow up in your face and invalidate your final conclusion, you can guess that "Ah, but what if the brain is nearly 100% computationally efficient?" is the sort of what-if that is not much worth contemplating because it is not actually going to be true in real life. Reality is going to confound you in some other way than that.

I mean, maybe you haven't read enough neuroscience and evolutionary biology that you can see from your own knowledge that the proposition sounds massively implausible and ridiculous. But it should hardly seem unlikely that somebody else, more learned in biology, might be justified in having more confidence than you. Phones don't fall up. Reality really is very stable and orderly in a lot of ways, even in places where you yourself are ignorant of that order.

But if "What if aliens are making themselves visible in flying saucers because they want high status and they'll have higher status if they're occasionally observable but never deign to talk with us?" sounds to you like it's totally plausible, and you don't see how someone can be so confident that it's not true - because oh no what if you're wrong and you haven't seen the aliens so how can you know what they're not thinking - then I'm not sure how to lead you into the place where you can dismiss that thought with confidence. It may require a kind of life experience that I don't know how to give people, at all, let alone by having them passively read paragraphs of text that I write; a learned, perceptual sense of which what-ifs have any force behind them. I mean, I can refute that specific scenario, I can put that learned sense into words; but I'm not sure that does me any good unless you learn how to refute it yourself.

Humbali: Can we leave aside all that meta stuff and get back to the object level?

Eliezer: This indeed is often wise.

Humbali: Then here's one way that the minimum computational requirements for general intelligence could be higher than Moravec's argument for the human brain suggests. After all, we only have one existence proof that general intelligence is possible at all, namely the human brain. Perhaps there's no way to get general intelligence in a computer except by simulating the brain neurotransmitter-by-neurotransmitter. In that case you'd need a lot more computing operations per second than you'd get by calculating the number of potential spikes flowing around the brain! What if it's true? How can you know?

(Modern person: This seems like an obvious straw argument? I mean, would anybody, even at an earlier historical point, actually make an argument like -

Moravec and Eliezer: YES THEY WOULD.)

Eliezer: I can imagine that if we were trying specifically to upload a human that there'd be no easy and simple and obvious way to run the resulting simulation and get a good answer, without simulating neurotransmitter flows in extra detail.

To imagine that every one of these simulated flows is being usefully used in general intelligence and there is no way to simplify the mind design to use fewer computations... I suppose I could try to refute that specifically, but it seems to me that this is a road which has no end unless I can convey the generator of my refutations. Your what-iffery is flung far enough that, if I cannot leave even that much rejection as an exercise for the reader to do on their own without my holding their hand, the reader has little enough hope of following the rest; let them depart now, in indignation shared with you, and save themselves further outrage.

I mean, it will obviously be less obvious to the reader because they will know less than I do about this exact domain, it will justly take more work for the reader to specifically refute you than it takes me to refute you. But I think the reader needs to be able to do that at all, in this example, to follow the more difficult arguments later.

Imaginary Moravec: I don't think it changes my conclusions by an order of magnitude, but some people would worry that, for example, changes of protein expression inside a neuron in order to implement changes of long-term potentiation, are also important to intelligence, and could be a big deal in the brain's real, effectively-used computational costs. I'm curious if you'd dismiss that as well, the same way you dismiss the probability that you'd have to simulate every neurotransmitter molecule?

Eliezer: Oh, of course not. Long-term potentiation suddenly turning out to be a big deal you overlooked, compared to the depolarization impulses spiking around, is very much the sort of thing where Reality sometimes jumps out and yells "Gotcha!" at you.

Humbali: How can you tell the difference?

Eliezer: Experience with Reality yelling "Gotcha!" at myself and historical others.

Humbali: They seem like equally plausible speculations to me!

Eliezer: Really? "What if long-term potentiation is a big deal and computationally important" sounds just as plausible to you as "What if the brain is already close to the wall of making the most efficient possible use of computation to implement general intelligence, and every neurotransmitter molecule matters"?

Humbali: Yes! They're both what-ifs we can't know are false and shouldn't be overconfident about denying!

Eliezer: My tiny feeble mortal mind is far away from reality and only bound to it by the loosest of correlating interactions, but I'm not that unbound from reality.

Moravec: I would guess that in real life, long-term potentiation is sufficiently slow and local that what goes on inside the cell body of a neuron over minutes or hours is not as big of a computational deal as thousands of times that many spikes flashing around the brain in milliseconds or seconds. That's why I didn't make a big deal of it in my own estimate.

Eliezer: Sure. But it is much more the sort of thing where you wake up to a reality-authored science headline saying "Gotcha! There were tiny DNA-activation interactions going on in there at high speed, and they were actually pretty expensive and important!" I'm not saying this exact thing is very probable, just that it wouldn't be out-of-character for reality to say something like that to me, the way it would be really genuinely bizarre if Reality was, like, "Gotcha! The brain is as computationally efficient of a generally intelligent engine as any algorithm can be!"

Moravec: I think we're in agreement about that part, or we would've been, if we'd actually had this conversation in 1988. I mean, I am a competent research roboticist and it is difficult to become one if you are completely unglued from reality.

Eliezer: Then what's with the 2010 prediction for strong AI, and the massive non-sequitur leap from "the human brain is somewhere around 10 trillion ops/sec" to "if we build a 10 trillion ops/sec supercomputer, we'll get strong AI"?