I talked to a Catholic priest at the Parliament who'd said something like, "there was a girl I know who started out Christian, but had a really bad experience with Christianity, but then found something spiritually appealing in a Hindu temple, and I encouraged her to find God in Hinduism", or something like that.

It's very relevant to me in discussing the value of religions that this is NOT Catholic doctrine. I'm pretty sure that this is a heresy, in that it contradicts a core tenet of Christian doctrine: the exclusivity of Christ.

A key component of Christian theology, since at least shortly after the crucifixion, is that salvation is found uniquely through Jesus Christ.

Now, I've read books about the Christian / Jewish mystical traditions that state otherwise: putting forward that Jesus was not the Messiah but a Messiah. I agree that some religious people have expressed that perspective. (I think, for some meanings of Messiah, it's even true.)

The most charitable interpretation of that state of affairs is that this is the secret esoteric meaning, accessible only to elite initiates (and/or repeatedly rediscovered by mystics), as distinct from the simple stories taught to the masses. But even that interpretation is a stretch: beliefs like this one are explicitly heretical to the explicit, enforced (though less so than in previous eras, see my other comment) doctrine of the organized religions. Proponents of those views were often excommunicated for expressing them.

That's pretty cruxy for me with regard to my attitudes about religion. I believe there are a few religious pluralists, and I probably like, and maybe agree with, a lot of them. But the thing that they're doing, which we both think is cool, is generally expressly forbidden/denied by institutionalized religion (at least in the West; I don't know enough about non-Abrahamic institutionalized religions to say one way or the other).

My read of your statements is that they're giving too much credit to the Catholic priesthood, and the Mormon priesthood, and the community of Protestant ministers, because there are a tiny number of religious pluralists who are expressing views and attitudes that those much larger organizational structures explicitly deny.

It seems like you're doing the opposite of throwing out the baby with the bathwater: refusing to throw out old, dirty bathwater because... there's a small chunk of soap in it, or something. (I'm aware I'm straining the analogy.) If your message was "hey: there's some useful soap in this bathwater, we should take it out and use it", I would be sympathetic. But your rhetoric reads to me as much more conciliatory than that: "yeah this bathwater isn't perfect, but it's actually pretty good!"

This is admittedly in the connotation, not the denotation. I expect we agree about most of the facts on the ground. But my impression of your overall attitude is that it's not accurately representing organized religions as a class in their actual expressed views and their actual behavior and impacts on the world.

I don't know much about religion, but my impression is the Pope disagrees with your interpretation of Catholic doctrine, which seems like strong counterevidence. For example, see this quote:

“All religions are paths to God. I will use an analogy, they are like different languages that express the divine. But God is for everyone, and therefore, we are all God’s children.... There is only one God, and religions are like languages, paths to reach God. Some Sikh, some Muslim, some Hindu, some Christian.”

And this one:

The pluralism and the diversity of religions, colour, sex, race and language are willed by God in His wisdom, through which He created human beings. This divine wisdom is the source from which the right to freedom of belief and the freedom to be different derives. Therefore, the fact that people are forced to adhere to a certain religion or culture must be rejected, as too the imposition of a cultural way of life that others do not accept.

"Thou shalt have no other Schelling points before me" is a pretty strong attractor for (at least naive) coordination tech.

An organisation such as the Catholic Church primarily wants to perpetuate its own existence, so of course the official doctrine is that they are The One True Church.

An individual Catholic, on the other hand, might genuinely believe that the benefits of religion are also available from other suppliers.

But by believing that, they automatically become not Catholic any more, according to the definition of Catholic given by the Catholic Boss who is also the only one with the right to make the rules. If they state that openly they are liable to be excommunicated, though of course most of the time no one will care (even in much darker times the Inquisition probably wouldn't come after every nobody who said something blasphemous once).

Possibly similar dilemma with e.g. UK political parties, who generally have a rule that publicly supporting another party’s candidate will get you expelled.

An individual party member, on the other hand, may well support the party’s platform in general, but think that that one particular candidate is an idiot who is unfit to hold political office - but is not permitted to say so.

(There is a joke about the Whitby Goth Weekend that everyone thinks half the bands are rubbish, but there is no consensus on which half that is. Something similar seems to hold for Labour Party supporters.)

I think there is rather a lot of soap to be found... but it's very much not something you can find by taking official doctrine as an actual authority.

Well, perhaps, but due to global commerce I can just go to the store and buy a bar of soap much more easily.

And perhaps you are fond of that particular type of soap and it's a bit harder to find the specific type that you're looking for but it's still not really worth saving the old bathwater for it, instead of just looking for that specific type of soap?

this is a heresy, in that it contradicts a core tenet of Christian doctrine: the exclusivity of Christ

I suspect this issue can be side-stepped if you point to some "pluralist (neo)-Hinduism" that makes Jesus an avatar of Vishnu.

Some quick reactions:

- I believe that religions contain a lot of psychological and cultural insight, and it's plausible to me that many of them contain many of the same insights.

- Religions can be seen as solutions to the coordination problem of how to get many very different people to trust each other. However, most of them solve it in a way which conflicts with other valuable cultural technologies (like science, free speech, liberal democracy, etc). I'm also sympathetic to the Nietzschean critique that they solve it in a way which conflicts with individual human agency and flourishing.

- Religions are, historically, a type of entity that consolidates power. E.g. Islam right now has a lot of power over a big chunk of the world. We should expect that the psychological insights within religions (and even the ones shared across religions) have been culturally selected in part for allowing those religions to gain power.

So my overall position here is something like: we should use religions as a source of possible deep insights about human psychology and culture, to a greater extent than LessWrong historically has (and I'm grateful to Alex for highlighting this, especially given the social cost of doing so).

But we shouldn't place much trust in the heuristics recommended by religions, because those heuristics will often have been selected for some combination of:

- Enabling the religion as a whole (or its leaders) to gain power and adherents.

- Operating via mechanisms that break in the presence of science, liberalism, individualism, etc (e.g. the mechanism of being able to suppress criticism).

- Operating via mechanisms that break in the presence of abrupt change (which I expect over the coming decades).

- Relying on institutions that have become much more corrupt over time.

Where the difference between a heuristic and an insight is something like the difference between "be all-forgiving" and "if you are all-forgiving it'll often defuse a certain type of internal conflict". Insights are about what to believe, heuristics are about what to do. Insights can be cross-checked against the rest of our knowledge, heuristics are much less legible because in general they don't explain why a given thing is a good idea.

IMO this all remains true even if we focus on the heuristics recommended by many religions, i.e. the pluralistic focus Alex mentions. And it remains true even given the point Alex made near the end: that "for people in Christian Western culture, I think using the language of Christianity in good ways can be a very effective way to reach the users." Because if you understand the insights that Christianity is built upon, you can use those to reach people without the language of Christianity itself. And if you don't understand those insights, then you don't know how to avoid incorporating the toxic parts of Christianity.

I think this:

Religions can be seen as solutions to the coordination problem of how to get many very different people to trust each other.

is the actual reason religions matter so much, and to a larger extent why they were created so much.

In slogan form, religion turns prisoner's dilemmas into stag hunts.
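One way to make the slogan concrete is a toy payoff table. This is only a rough sketch: the payoff numbers, the `with_betrayal_cost` helper, and the size of the cost are all illustrative assumptions, not anything claimed in this thread. The idea is that if a shared religion attaches a cost (guilt, reputational loss, divine sanction) to betraying someone who cooperated with you, mutual cooperation can become a stable outcome alongside mutual defection, which is the structure of a stag hunt.

```python
from itertools import product

ACTIONS = ("cooperate", "defect")

# Illustrative prisoner's dilemma payoffs: (row player, column player).
PRISONERS_DILEMMA = {
    ("cooperate", "cooperate"): (3, 3),
    ("cooperate", "defect"): (0, 5),
    ("defect", "cooperate"): (5, 0),
    ("defect", "defect"): (1, 1),
}


def pure_nash_equilibria(payoffs):
    """Return action pairs where neither player gains by deviating alone."""
    equilibria = []
    for a, b in product(ACTIONS, repeat=2):
        row_ok = all(payoffs[(a, b)][0] >= payoffs[(alt, b)][0] for alt in ACTIONS)
        col_ok = all(payoffs[(a, b)][1] >= payoffs[(a, alt)][1] for alt in ACTIONS)
        if row_ok and col_ok:
            equilibria.append((a, b))
    return equilibria


def with_betrayal_cost(payoffs, cost):
    """Hypothetical helper: subtract `cost` from whoever defects on a cooperator."""
    adjusted = {}
    for (a, b), (pa, pb) in payoffs.items():
        if a == "defect" and b == "cooperate":
            pa -= cost
        if b == "defect" and a == "cooperate":
            pb -= cost
        adjusted[(a, b)] = (pa, pb)
    return adjusted


# Assumed cost of 3, chosen so that betraying a cooperator pays less than cooperating.
STAG_HUNT = with_betrayal_cost(PRISONERS_DILEMMA, cost=3)

print(pure_nash_equilibria(PRISONERS_DILEMMA))
print(pure_nash_equilibria(STAG_HUNT))
```

In the first game, mutual defection is the only stable outcome. In the adjusted game, mutual cooperation is also stable, so the remaining problem is one of trust (will the other person show up for the stag?) rather than one of incentives.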

So my overall position here is something like: we should use religions as a source of possible deep insights about human psychology and culture, to a greater extent than LessWrong historically has (and I'm grateful to Alex for highlighting this, especially given the social cost of doing so).

Thanks a lot for the kind words!

IMO this all remains true even if we focus on the heuristics recommended by many religions, i.e. the pluralistic focus Alex mentions.

I think we're interpreting "pluralism" differently. Here are some central illustrations of what I consider to be the pluralist perspective:

- the Catholic priest I met at the Parliament of World Religions who encouraged someone who had really bad experiences with Christianity to find spiritual truth in Hinduism

- the passage in the Quran that says the true believers of Judaism and Christianity will also be saved

- the Vatican calling the Buddha and Jesus great healers

I don't think "lots of religions recommend X" means the pluralist perspective thinks X is good. If anything, the pluralist perspective is actually pretty uncommon / unusual among religions, especially these days.

Because if you understand the insights that Christianity is built upon, you can use those to reach people without the language of Christianity itself. And if you don't understand those insights, then you don't know how to avoid incorporating the toxic parts of Christianity.

I think this doesn't work for people with IQ <= 100, which is about half the world. I agree that an understanding of these insights is necessary to avoid incorporating the toxic parts of Christianity, but I think this can be done even using the language of Christianity. (There's a lot of latitude in how one can interpret the Bible!)

I think we're interpreting "pluralism" differently. Here are some central illustrations of what I consider to be the pluralist perspective:

- the Catholic priest I met at the Parliament of World Religions who encouraged someone who had really bad experiences with Christianity to find spiritual truth in Hinduism

- the passage in the Quran that says the true believers of Judaism and Christianity will also be saved

- the Vatican calling the Buddha and Jesus great healers

If I change "i.e. the pluralist focus Alex mentions" to "e.g. the pluralist focus Alex mentions" does that work? I shouldn't have implied that all people who believe in heuristics recommended by many religions are pluralists (in your sense). But it does seem reasonable to say that pluralists (in your sense) believe in heuristics recommended by many religions, unless I'm misunderstanding you. (In the examples you listed these would be heuristics like "seek spiritual truth", "believe in (some version of) God", "learn from great healers", etc.)

I think this doesn't work for people with IQ <= 100, which is about half the world. I agree that an understanding of these insights is necessary to avoid incorporating the toxic parts of Christianity, but I think this can be done even using the language of Christianity. (There's a lot of latitude in how one can interpret the Bible!)

I personally don't have a great way of distinguishing between "trying to reach these people" and "trying to manipulate these people". In general I don't even think most people trying to do such outreach genuinely know whether their actual motivations are more about outreach or about manipulation. (E.g. I expect that most people who advocate for luxury beliefs sincerely believe that they're trying to help worse-off people understand the truth.) Because of this I'm skeptical of elite projects that have outreach as a major motivation, except when it comes to very clearly scientifically-grounded stuff.

If I change "i.e. the pluralist focus Alex mentions" to "e.g. the pluralist focus Alex mentions" does that work? I shouldn't have implied that all people who believe in heuristics recommended by many religions are pluralists (in your sense). But it does seem reasonable to say that pluralists (in your sense) believe in heuristics recommended by many religions, unless I'm misunderstanding you. (In the examples you listed these would be heuristics like "seek spiritual truth", "believe in (some version of) God", "learn from great healers", etc.)

If your main point is "don't follow religious heuristics blindly, only follow them if you actually understand why they're good" I'm totally with you. I think I got thrown off a bit because, AFAIU, the way people tend to come to adopt pluralist views is by doing exactly that, and thereby coming to conclusions that go against mainstream religious interpretations. (I am super impressed that the Pope himself seems to have been going in this direction. The Catholic monks at the monastery I visited generally wished the Pope were a lot more conservative.)

I personally don't have a great way of distinguishing between "trying to reach these people" and "trying to manipulate these people".

I use heuristics similar to those for communicating to young children.

In general I don't even think most people trying to do such outreach genuinely know whether their actual motivations are more about outreach or about manipulation. (E.g. I expect that most people who advocate for luxury beliefs sincerely believe that they're trying to help worse-off people understand the truth.) Because of this I'm skeptical of elite projects that have outreach as a major motivation, except when it comes to very clearly scientifically-grounded stuff.

This is why I mostly want religious pluralist leaders who already have an established track record of trustworthiness in their religious communities to be in charge of getting the message across to the people of their religion.

I was telling one of the Catholic priests there about my experience of Jesus during an ayahuasca ceremony and he was just like, "I don't know what ayahuasca is, but the story you told sounds super legit and you are super lucky to have had that experience at such a young age. I've only had this experience after decades and decades of going deep into Catholicism and all the rites and rituals. All the doctrines of Catholicism are really about having that kind of experience. And you just had it directly." And another one who heard it was just like, "Whatever you're doing, Alex, keep doing it. It sounds like you're on the right track."

Reminded me of this:

In the early 1980’s Father Thomas Keating, a Catholic priest, sponsored a meeting of contemplatives from many different religions. The group represented a few Christian denominations as well as Zen, Tibetan, Islam, Judaism, Native American & Nonaligned. They found the meeting very productive and decided to have annual meetings. Each year they have a meeting at a monastery of a different tradition, and share the daily practice of that tradition as a part of the meetings. The purpose of the meetings was to establish what common understandings they had achieved as a result of their diverse practices. The group has become known as the Snowmass Contemplative Group because the first of these meetings was held in the Trappist monastery in Snowmass, Colorado.

When scholars from different religious traditions meet, they argue endlessly about their different beliefs. When contemplatives from different religious traditions meet, they celebrate their common understandings. Because of their direct personal understanding, they were able to comprehend experiences which in words are described in many different ways. The Snowmass Contemplative Group has established seven Points of Agreement that they have been refining over the years:

- The potential for enlightenment is in every person.

- The human mind cannot comprehend ultimate reality, but ultimate reality can be experienced.

- The ultimate reality is the source of all existence.

- Faith is opening, accepting & responding to ultimate reality.

- Confidence in oneself as rooted in the ultimate reality is the necessary corollary to faith in the ultimate reality.

- As long as the human experience is experienced as separate from the ultimate reality it is subject to ignorance, illusion, weakness and suffering.

- Disciplined practice is essential to the spiritual journey, yet spiritual attainment is not the result of one’s effort but the experience of oneness with ultimate reality. [...]

Contemplatives from different traditions generally agree that there is a transforming experience they agree to call enlightenment. They agree that enlightenment is attained as a result of controlling the mind with various forms of practice.

In physics, the objects of study are mass, velocity, energy, etc. It’s natural to quantify them, and as soon as you’ve done that you’ve taken the first step in applying math to physics. There are a couple reasons that this is a productive thing to do:

- You already derive benefit from a very simple starting point.

- There are strong feedback loops. You can make experimental predictions, test them, and refine your theories.

Together this means that you benefit from even very simple math and can scale up smoothly to more sophisticated math: from simply adding masses, to F=ma, to Lagrangian mechanics and beyond.

It’s not clear to me that those virtues apply here:

- I don’t see the easy starting point, the equivalent of adding two masses.

- It’s not obvious that the objects of study are quantifiable. It’s not even clear what the objects of study are.

- Formal statements about religion must be unfathomably complex?

- I don’t see feedback loops. It must be hard to run experiments, make predictions, etc.

Perhaps these concerns would be addressed by examples of the kind of statement you have in mind.

It could also help for zhukeepa to give any single instance of such a 'Rosetta Stone' between different ideologies or narratives or (informal) worldviews. I do not currently know what to imagine, other than a series of loose analogies, which can be helpful, but are a bit of a difficult target to point at and I don't expect to find with a mathematical framework.

It's relevant that I think of the type signature of religious metaphysical claims as being more like "informal descriptions of the principles of consciousness / the inner world" (analogously to informal descriptions of the principles of the natural world) than like "ideology or narrative". Lots of cultures independently made observations about the natural world, and Newton's Laws in some sense could be thought of as a "Rosetta Stone" for these informal observations about the natural world.

Perhaps these concerns would be addressed by examples of the kind of statement you have in mind.

I'm not sure exactly what you're asking -- I wonder how much my reply to Adam Shai addresses your concerns?

I will also mention this quote from the category theorist Lawvere, whose line of thinking I feel pretty aligned with:

It is my belief that in the next decade and in the next century the technical advances forged by category theorists will be of value to dialectical philosophy, lending precise form with disputable mathematical models to ancient philosophical distinctions such as general vs. particular, objective vs. subjective, being vs. becoming, space vs. quantity, equality vs. difference, quantitative vs. qualitative etc. In turn the explicit attention by mathematicians to such philosophical questions is necessary to achieve the goal of making mathematics (and hence other sciences) more widely learnable and useable. Of course this will require that philosophers learn mathematics and that mathematicians learn philosophy.

I think getting technical precision on philosophical concepts like these will play a crucial role in the kind of math I'm envisioning.

Thank you for recording and posting these, I feel like I learned a lot, both about how to have conversations and lots of little details like the restaurant thing as proto preference synthesizer and the trauma cancer analogy and the Muhammad story and the disendorsing all judgements/resentments thing.

but the pulse I got was like, exclusivism is waning, it's losing popularity very fast, and pluralism is on the rise.

My read, from my very limited perspective mostly engaging with new-age folks, and my serious-Christian high school peers, as a teenager, is that this is mostly a response to increased secularism and waning traditional religious influence.

Enlightenment, post-Westphalian norms are very heavy on freedom of religion. As the influence of religions has waned, societal mores have come to rest more and more on secular morality. As an example, compare how in the 1950s, the question of whether someone was "a good person" was loaded on whether they were an upstanding christian, and in 2010, the question of whether someone is "a good person" is much more highly loaded on whether they're racist.

In that process, insisting on religious exclusivism has become kind of gauche: it makes you seem like a backwards, bigoted, not-very-cosmopolitan person.

So how can religions respond? They can double down on calling out how mainstream society is sinful and try to fight the culture war. Or they can get with the program, and become hip and liberal, which means being accepting of other religions, to the point of saying that they're all paths up the same mountain, in defiance of centuries of doctrine and literal bloody violence.

And in practice, different religious communities have taken either one or some combination of these paths.

But this doesn't represent an awakening to the metaphysical truth underlying all religions (which again, I'm much more sympathetic to than many). It mostly represents religious institutions adapting to the pressures of modernity and secularism.

It looks to me like mostly a watering down of religion, rather than an expression of some deep spiritual impulse.

It's basically the same situation as regards doctrine on evolution by natural selection. The church fought that one, tooth and nail, for centuries, declaring that it was an outright. But secular culture eventually (mostly) beat the church in that culture war, and only now, to stay relevant, does the church backslide, putting forward much weaker versions of their earlier position, whereby evolution is real, but compatible with divine creation. It's confused to look at that situation and attribute it to the Church's orientation to religion, all along, being more correct than we gave it credit for.

And the sociology matters here because it makes different predictions about what will accelerate and increase this trend. If I'm correct, and this shift is mostly downstream of religions losing their influence compared to broader secular mores and values, then we won't get more of it by increasing the importance and salience of religion.

Granted, I'll give you that much of the world is still religious, and maybe the best way to bridge to them to produce the transformations the world needs, is through their religion. But I think you're overstating how much religious pluralism is a force on the rise, as opposed to a kind of weak-sauce reaction to external forces.

And FWIW, there's something cruxy for me here. I would be more interested in religions if I thought that there was a positive rising force of religious pluralism (especially one that was grounded in the mystical traditions of the various religions), instead of a reactive one.

Yeah... something about this conversation's annoying. I can't figure out what it is.

You're what I call "agreeing to agree".

(shit, I guess I never published that blog post, or the solution to it. Uh. this comment is a placeholder for me.)

My recommendation is to sidestep talking about propositions that you agree with or disagree with, and generate hypothetical or real situations, looking for the ones where you're inclined to take different actions. In my experience, that surfaces real disagreement better than talking about what you believe (for a number of reasons).

eg

- If you had the option to press a button to cut the number of observant christians in the world by half (they magically become secular atheists) would you press it? What if you have to choose between doubling the number of observant christians or halving the number, with no status quo option?

- If you could magically cause 10% of the people in the world to have read a single book, what book? (With the idea being that maybe Alex would recommend a religious book, and Ben probably wouldn't.)

- What attitude do each of you have about SBF or FTX? Do you have some joy about his getting jail time? What would each of you do if you were the judge of his case?

- Same question but for other FTX employees.

- If Ben catches a person outright lying on LessWrong, what should he do?

- [possibly unhelpfully personal] Should Ben have done anything different in his interactions with Nonlinear?

(Those are just suggestions. Probably you would need to generate a dozen or so, and tweak and iterate until you find a place where you have different impulses for what to do in that situation.)

If you find an example where you take different actions or have a different attitude, now you're in a position to start trying to find cruxes.

Personally, I'm interested: does this forgiveness business suggest any importantly different external actions? Or is it mostly about internal stance?

My answer to a few of Eli's prompts below.

If you had the option to press a button to cut the number of observant christians in the world by half (they magically become secular atheists) would you press it? What if you have to choose between doubling the number of observant christians or halving the number, with no status quo option?

Half, naturally. Best to rip the band-aid off and then seek new things that aren't fundamentally based in falsehoods and broken epistemologies.

If you could magically cause 10% of the people in the world to have read a single book, what book? (With the idea being that maybe Alex would recommend a religious book, and Ben probably wouldn't.)

10% of the world is way too many people. I'm not sure a lot of them would be capable of comprehending a lot of the material I've read, given the distribution of cognitive and reading ability in the world. It would probably have to be something written for most people to read, not like ~anything on LessWrong. Like, if HPMOR could work I'd pick that. If not, perhaps some Dostoyevsky? But, does 10% of the world speak English? I am confused about the hypothetical.

But not the Bible or the Quran or what-have-you, if that's what you're thinking.

What attitude do each of you have about SBF or FTX? Do you have some joy about his getting jail time? What would each of you do if you were the judge of his case?

I don't think 'joy' is what I'd feel, but I am motivated by the prospect of him getting jail time. If I could work to counterfactually increase his jail time or cause it to happen at all, I'd be very motivated to spend a chunk of my life doing so. If someone were to devote much of their adult life to making sure SBF went to jail, I'd think that a pretty good way to spend one's life. The feeling is one of 'setting things right'. Justice is important and motivating. There's something healing about horrendous behavior on a mass scale getting punished, rather than people behaving in sickening ways and then getting to simply move forward with their lives with no repercussions or accountability. Something is very broken when people can betray your trust and intentionally hurt you that much for no good reason, and for them to get away with it can really make the hurt person quite twisted and unable to trust other people to be good. And so it is good to set things right.

If Ben catches a person outright lying on LessWrong, what should he do?

If someone verifiably lies, by default I'll just call it out and have it be a part of their reputation (and bring it up in the future when their reputation is relevant). That's how most things are dealt with, and in this scene things will likely go pretty badly for them. If they want to apologize and make amends then they can perhaps settle up the costs they imposed on both the people they deceived and the damage they did to social norms, but we have rules about what we moderate (e.g. sockpuppeting), and it isn't "all unethical behavior". Most people have lied in their lives (including me); I am not going to personally hold accountable (or rate limit) everyone who has ever behaved badly. In general, someone having behaved badly in their lives does not mean they cannot make good contributions to public discourse. I wouldn't stop most people involved in major crimes like murder and fraud from commenting on LessWrong about areas of interest / importance, e.g. Caroline Ellison would be totally able to comment on LW about most topics we discuss.

10% of the world is way too many people. I'm not sure a lot of them would be capable of comprehending a lot of the material I've read, given the distribution of cognitive and reading ability in the world. It would probably have to be something written for most people to read, not like ~anything on LessWrong. Like, if HPMOR could work I'd pick that. If not, perhaps some Dostoyevsky? But, does 10% of the world speak English? I am confused about the hypothetical.

The idea of this conversational technique is that you can shape the hypothetical to find one where the two of you have strong, clear, differing intuitions.

If you're like "IDK man, most people won't even understand most of the books that I think are important, and so most of the problem is figuring out something that 'works' at all, not picking the best thing", you could adjust the hypothetical accordingly. What about 10% of the global population sampled randomly from people who have above 110 IQ, and if they're not English speakers they get a translation? Does that version of the hypothetical give you a clearer answer?

Or like (maybe this is a backwards way to frame things but) I would guess[1] that there's a version of some question like this to which you would answer the sequences, or something similar, since it seems like your take is "[one of] the major bottleneck[s] in the world is making words mean things." Is there a version that does return the sequences or similar?

FYI, I feel interested in these answers and wonder if Alex disagrees with either the specific actions or something about the spirit of the actions.

For instance, my stereotype of religious prophets is that they don't dedicate their life to taking down or prosecuting a particular criminal. My personal "what would Jesus / Buddha do?" doesn't return "commit my life to making sure that guy gets jail time." Is that an "in" towards your actual policy differences (the situations in the world where you would make different tradeoffs)?

[1] Though obviously, don't let my guesses dictate your attitudes. Maybe you don't actually think anything like that!

Really appreciated this exchange, Ben & Alex have rare conversational chemistry and ability to sense-make productively at the edge of their world models.

I mostly agree with Alex on the importance of interfacing with extant institutional religion, though less sure that one should side with pluralists over exclusivists. For example, exclusivist religious groups seem to be the only human groups currently able to reproduce themselves, probably because exclusivism confers protection against harmful memes and cultural practices.

I'm also pursuing the vision of a decentralized singleton as an alternative to Moloch or turnkey totalitarianism, although it's not obvious to me how the psychological insights of religious contemplatives are crucial here, rather than skilled deployment of social technology like the common law, nation states, mechanism design, cryptography, recommender systems, LLM-powered coordination tools, etc. Is there evidence that "enlightened" people, for some sense of "enlightened", are in fact better at cooperating with each other at scale?

If we do achieve existential security through building a stable decentralized singleton, it seems much more likely that it would be the result of powerful new social tech, rather than the result of intervention on individual psychology. I suppose it could be the result of both with one enabling the other, like the printing press enabling the Reformation.

I can grant that some pathological liars didn't pick it up culturally, and kind of just have fucked up genetics. But I still prefer to view them as a person who would ultimately actually prefer truth but took a wrong turn at some point, than as someone who is just fundamentally in their core a pathological liar. I think, with the technologies available today, me viewing them as a mistake theorist does not lead to substantially different actions from me viewing them as a conflict theorist, except perhaps that I might be more attuned to the suffering they're going through for having such a deeply entrenched false belief about the best way to live their life.

This is sus.

In an effort to assert that everyone is basically good, we've found ourselves asserting that people who are very psychologically different than us, who were born that way, are fundamentally mistaken, at a genetic level?

I at least want to be open to the possibility of the sociopath who straightforwardly likes being a sociopath—it works for them, they sincerely don't want to be different, they think it would be worse to be neurotypical. Maybe, by their own, coherent lights, they're just right about that.

(In much the same way that many asexuals look at the insanity of sexual desire and think "why would I want to be like that?!", while the normally-sexed people would absolutely not choose to delete their sex drive.)

Yeah, I think it's anti-natural for such an entity to exist, and that's a crux for religion-according-to-Alex.

Fantastic. I would love to figure out if this is true.

It's a crux for my worldview too. A Double Crux!

Because I am unusually good at these things, I am also unusually good at earning the trust of people I encounter, from a broad array of backgrounds.

From my personal experience with you, Alex, I agree that you seem unusually good at earning (at least a certain kind of) trust from people you encounter, from a broad array of backgrounds. I don't particularly attribute that to the forgiveness thing? More so to the fact that you're friendly, and have an unusual-for-humans ability and inclination to pass ITTs.

which is being able to ground the apparently contradictory metaphysical claims across religions into a single mathematical framework.

Is there a minimal operationalized version of this? Something that is the smallest formal or empirical result one could have that would count to you as small progress towards this goal?

I'm not sure how much this answers your question, but:

- I actually think Buddhism's metaphysics is quite well-fleshed-out, and AFAIK has the most fleshed-out metaphysical system out of all the religious traditions. I think it would be sufficient for my goals to find a formalization of Buddhist metaphysics, which I think would be detailed and granular enough to transcend and include the metaphysics of other religious traditions.

- I think a lot of Buddhist claims can be described in the predictive processing framework -- see e.g. this paper giving a predictive processing account of no-self, and this paper giving a predictive processing account of non-dual awareness in terms of temporal depth. I would consider these papers small progress towards the goal, insofar as they give (relatively) precise computational accounts of some of the principles at play in Buddhism.

- I don't think the mathematical foundations of predictive processing or active inference are very satisfactory yet, and I think there are aspects of Buddhist metaphysics that are not possible to represent in terms of these frameworks yet. Chris Fields (a colleague of Michael Levin and Karl Friston) has some research that I think extends active inference in directions that seem promising for putting active inference on sounder mathematical footing. I haven't looked too carefully into his work, but I've been impressed by him the one time I talked with him, and I think it's plausible that his research would qualify as small progress toward the goal.

- I've considered any technical model that illustrates the metaphysical concepts of emptiness and dependent origination (which are essentially the central concepts of Buddhism) to be small progress towards the goal. Some examples of this:

- In his popular book Helgoland, Carlo Rovelli directly compares the core ideas of relational quantum mechanics to dependent origination (see also the Stanford Encyclopedia of Philosophy page on RQM).

- It can be very hard to wrap one's mind around how the Buddhist concept of emptiness could apply for the natural numbers. I found John Baez's online dialogue about how "'the' standard model [of arithmetic] is a much more nebulous notion than many seem to believe" to be helpful for understanding this.

- Non-classical logics that don't take the law of excluded middle for granted helped me to make sense of the concept of emptiness in the context of truth values; the Kochen-Specker theorem helped me make sense of the concept of emptiness in the context of physical properties; and this paper giving a topos perspective on the Kochen-Specker theorem helped me put the two together.

Thanks, this was clarifying. I am wondering if you agree with the following (focusing on the predictive processing parts since that's my background):

There are important insights and claims from religious sources that seem to capture psychological and social truths that aren't yet fully captured by science. At least some of these phenomena might be formalizable via a better understanding of how the brain and the mind work, and to that end predictive processing (and other theories of that sort) could be useful to explain the phenomena in question.

You spoke of wanting formalization but I wonder if the main thing is really the creation of a science, though of course math is a very useful tool to do science with and to create a more complete understanding. At the end of the day we want our formalizations to comport with reality - whatever aspects of reality we are interested in understanding.

There are important insights and claims from religious sources that seem to capture psychological and social truths that aren't yet fully captured by science. At least some of these phenomena might be formalizable via a better understanding of how the brain and the mind work, and to that end predictive processing (and other theories of that sort) could be useful to explain the phenomena in question.

Yes, I agree with this claim.

You spoke of wanting formalization but I wonder if the main thing is really the creation of a science, though of course math is a very useful tool to do science with and to create a more complete understanding. At the end of the day we want our formalizations to comport with reality - whatever aspects of reality we are interested in understanding.

That feels resonant. I think the kind of science I'm hoping for is currently bottlenecked by us not yet having the right formalisms, kind of like how Newtonian physics was bottlenecked by not having the formalism of calculus. (I would certainly want to build things using these formalisms, like an ungameable steel-Arbital.)

An idealized version would be like a magic box that's able to take in a bunch of people with conflicting preferences about how they ought to coordinate (for example, how they should govern their society), figure out a synthesis of their preferences,

(I didn't read most of the dialogue so this may be addressed elsewhere)

I think this is subtly but importantly wrong. I think what you're actually supposed to be trying to get at is more like creating preferences than reconciling preferences.

I'm not sure how you're interpreting the distinction between creating a preference vs reconciling a preference.

Suppose Alice wants X and Bob wants Y, and X and Y appear to conflict, but Carol shows up and proposes Z, which Alice and Bob both feel like addresses what they'd initially wanted from X and Y. Insofar as Alice and Bob both prefer Z over X and Y and hadn't even considered Z beforehand, in some sense Carol created this preference for them; but I also think of this preference for Z as reconciling their conflicting preferences X and Y.

I'm saying that a religious way of being is one where the minimal [thing that can want, in the fullest sense] is a collective.

I don't really get how what you just said relates to creating vs reconciling preferences. Can you elaborate on that a bit more?

I'll try a bit but it would take like 5000 words to fully elaborate, so I'd need more info on which part is unclear or not trueseeming.

One piece is thinking of individual humans vs collectives. If an individual can want in the fullest sense, then a collective is some sort of combination of wants from constituents--a reconciliation. If an individual can't want in the fullest sense, but a collective can, then: If you take several individuals with their ur-wants and create a collective with proper wants, then a proper want has been created de novo.

The theogenic/theopoetic faculty points at creating collectives-with-wants, but it isn't a want itself. A flowerbud isn't a flower.

The picture is complicated of course. For example, individual humans can do this process on their own somewhat, with themselves. And sometimes you do have a want, and you don't understand the want clearly, and then later come to understand the want more clearly. But part of what I'm saying is that many episodes that you could retrospectively describe that way are not really like that; instead, you had a flowerbud, and then by asking for a flower you called the flowerbud to bloom.

Thanks for the elaboration. Your distinction about creating vs reconciling preferences seems to hinge on the distinction between "ur-want" and "proper want". I'm not really drawing a type-level distinction between "ur-want" and "proper want", and think of each flower as itself being a flowerbud that could further bloom. In my example of Alice wanting X, Bob wanting Y, and Carol proposing Z, I'd thought of X and Y as both "proper wants" and "ur-wants that bloomed into Z".

But just as a question of are they a good person with bad parts clumped on top or are they just a bunch of good and bad parts and they could probably become a different person. You seem to be a bit essentialist about, "No, there's a good person there and they just have some issues," whereas I'm like, "Here's a person and here's some of their properties. We would like to change which person they are because this person utterly sucks."

Mistake versus conflict theory are both valid self-fulfilling prophecies one could use to model someone, and I think I'm less being an essentialist about people being intrinsically good, and more insisting on mistake theory being a self-fulfilling prophecy that wins more, in that I think it gets you strictly more degrees of freedom in how you can interact with them.

I strongly agree with the bolded part, and have often been in the position of advocating for this "spiritual stance".

But I feel like this answer is kind of dodging the question of "which frame is actually a more accurate model of reality?" I get that they're frames, and you can use multiple frames to describe the same phenomenon.

But the "people are basically good underneath their trauma" and the stronger form "all people are basically good underneath their trauma" do make specific predictions about how those people would behave under various circumstances.

Specifically, I'm tracking at least three models:

- Almost everyone is traumatized, to various degrees. That trauma inhibits their ability to reason freely about the world, and causes them to respond to various stimuli with long-ago-learned not-very-calibrated flailing responses. Their psychology is mostly made of a stack of more-or-less reflective behavioral patterns and habits which developed under the constraints of the existing psychological ecosystem of behaviors and patterns. But underneath all of that is a forgotten but ever-present Buddha-nature of universal love for all beings.

vs.

- Almost everyone is traumatized, to various degrees. That trauma inhibits their ability to reason freely about the world, and causes them to respond to various stimuli with long-ago-learned not-very-calibrated flailing responses. Their psychology is mostly made of a stack of more-or-less reflective behavioral patterns and habits which developed under the constraints of the existing psychological ecosystem of behaviors and patterns. But if that trauma is cleared, that mind is a basically-rational optimizer for one's personal and genetic interests.

vs.

- Almost everyone is traumatized, to various degrees. That trauma inhibits their ability to reason freely about the world, and causes them to respond to various stimuli with long-ago-learned not-very-calibrated flailing responses. Their psychology is mostly made of a stack of more-or-less reflective behavioral patterns and habits which developed under the constraints of the existing psychological ecosystem of behaviors and patterns. But underneath all of that is a pretty random kludge of evolutionary adaptations.

Obviously, reality will be more complicated than any of these three simplifications, and is probably a mix of all of them to various degrees.

But I think it is strategically important to have a model of 1) what the underlying motivational stack for most humans is like and 2) how much variation there is in underlying motivations between humans.

I also think they tend to be pretty unkind to themselves and miserable in a particular way,

This is not my understanding of the psychology of most literal clinical sociopaths. It is my read of, say, Stalin.

Okay. Yeah, I think there are more datapoints in steel-Islam, for how to navigate the fact that even though cancers might be mistakes, you sometimes maybe still need to kill them anyway.

In reference to some of my other comments here, I would be much more sympathetic to this take if the historical situation were that Mohamed led wars of self-defense against aggressors. But he led wars of conquest in the name of Islam, which continued for another century after his death.

As Jesus says, "by your fruits you will know them."

Okay. I think the Comet King is baffled by the problem of evil and saw evil as something to destroy. I think he resists evil. And I think part of what I found interesting was at the end of Unsong, there's this thing about how Thamiel actually has all along been doing God's will. And everything that looked like evil actually was done with noble intentions or something, that I found... it got me thinking that Scott Alexander might be wiser than the Comet King character he wrote

@Ben Pace, this should have a note that says "spoilers for unsong", and spoiler tags over the offending sentences?

I think it would be more like "here's the correct way to interpret these stories, in contrast to these other interpretations that a majority of people currently use to interpret them".

So like, there's already a whole cottage industry of interpretations of religious texts. Jordan Peterson, for instance, gives a bunch of "psychological" readings of the meaning of the Bible, which got attention recently. (And notably, he has a broadly pluralistic take on religion.)

But there are lots and lots of rabbis and ministers and so on promoting their own interpretations or promoting interpretations, many of which are hundreds of years old. There's a vast body of scholarship regarding what these texts mean and how to interpret them.

Alex, it sounds like you hope to add one more interpretation to the mix, with firmer mathematical rigor.

Do you think that that one will be taken up by some large fraction of Christians, Muslims, Jews, or Buddhists? Is there some group that will see the formalizations and recognize them as self-evidently the truth that their personal religious tradition was pointing at?

My sense is that Alex feels the relevant thing is that speaking to people from his interpretation of religious texts is a more effective way of communicating with many/most people in the world than not-doing that, which isn't quite the same as saying "and it will definitely become the most widespread interpretation of these texts", though is a part of a path toward having the most widespread interpretation.

But also, I am annoyed again.

FYI @Ben Pace, as a reader, I resonate with your annoyance in this conversation.

For me, I agree with many of Alex's denotational claims, but feel like he's also slipping in a bunch of connotation that I think is mostly wrong, but because he's agreeing with the denotation for any particular point you bring up, it feels slippery, or like I'm having one pulled over on me.

It has a motte-and-bailey feel to it. Like Alex will tell me that "oh of course Heaven and Hell are not literal places under the earth and in the sky, that's obviously dumb." But when he goes to talk with religious people, who might think that, or something not quite as wrong as that but still something I think is pretty wrong, he'll talk about Heaven and Hell without clarifying what he means, and it will seem like they're agreeing, but the transitive property of agreement between you and Alex and Alex and the religious person doesn't actually hold.

Like, it reads to me like Alex keeps trying to say "you and I, Ben, we basically agree", and as an onlooker, I don't think you actually agree on a bunch of important but so-far-inexplicit points.

[Ironically, if Alex does succeed in his quest of getting formal descriptions of all this religious stuff, that might solve this problem. Or at least solve it with religious people who also happen to be mathematicians.]

I don't know if that description matches your experience; this is just my take as an onlooker.

For what it's worth, I think Martin Luther King and Gandhi are examples of people who had done things in the world inspired by religious exemplars

Yes, but it sounds like you're not mostly interested in reading about their stories. You're interested in reading about the stories of prophets, who, overall, have had a mixed impact on the world.

it seems like the only other people who care about being better in this way are super into religion/spirituality... well

How literally true is this? I would be very interested in any examples of secular / non-religious heroes of this type, to help me triangulate the concept you're pointing at.

While I don't necessarily think that any particular religion is likely to be a particularly good gateway for these insights to a randomly chosen person, I do think these insights can be found in the religions if you look in the right places and interpret them in the right way

Is there a further claim that those insights can really be gotten from other venues? Or that (even though they're not necessarily a good gateway for any particular person) they're still better than the alternatives?

Ben Pace

Can you say slightly more detail about how you think the preference synthesizer thing is supposed to work?

zhukeepa

Well, yeah. An idealized version would be like a magic box that's able to take in a bunch of people with conflicting preferences about how they ought to coordinate (for example, how they should govern their society), figure out a synthesis of their preferences, and communicate this synthesis to each person in a way that's agreeable to them.

...

Ben Pace

Okay. So, you want a preference synthesizer, or like a policy-outputter that everyone's down for?

zhukeepa

Yes, with a few caveats, one being that I think preference synthesis is going to be a process that unfolds over time, just like truth-seeking dialogue that bridges different worldviews.

...

zhukeepa

Yeah. I think the thing I'm wanting to say right now is a potentially very relevant detail in my conception of the preference synthesis process, which is that to the extent that individual people in there have deep blind spots that lead them to pursue things that are at odds with the common good, this process would reveal those blind spots while also offering the chance to forgive them if you're willing to accept it and change.

I may be totally off, but whenever I read you (zhukeepa) elaborating on the preference synthesizer idea I kept thinking of democratic fine-tuning (paper: What are human values, and how do we align AI to them?), which felt like it had the same vibe. It's late at night here so I'll butcher their idea if I try to explain it, so instead I'll just dump a long quote and a bunch of pics and hope you find it at least tangentially relevant:

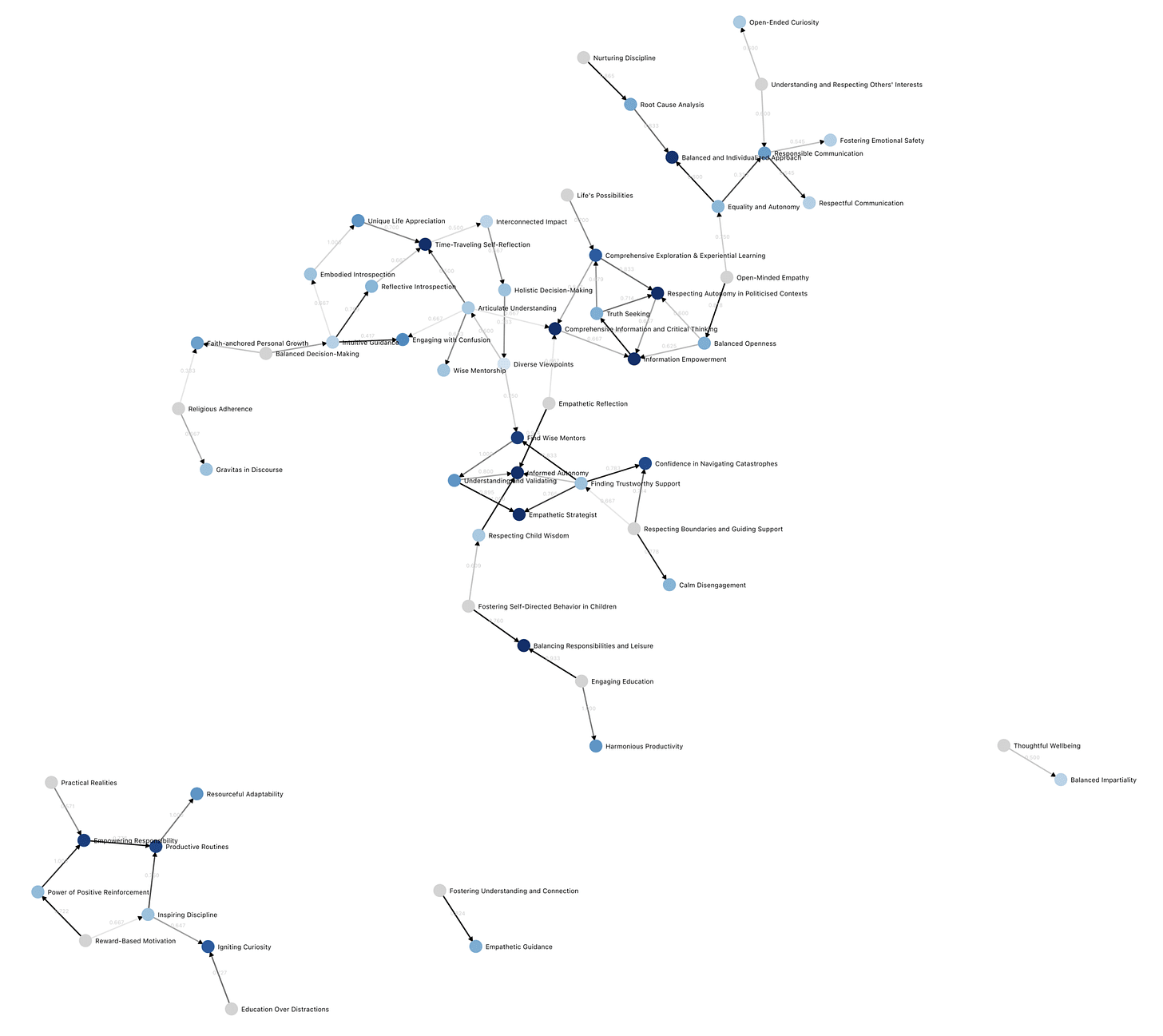

We report on the first run of “Democratic Fine-Tuning” (DFT), funded by OpenAI. DFT is a democratic process that surfaces the “wisest” moral intuitions of a large population, compiled into a structure we call the “moral graph”, which can be used for LLM alignment.

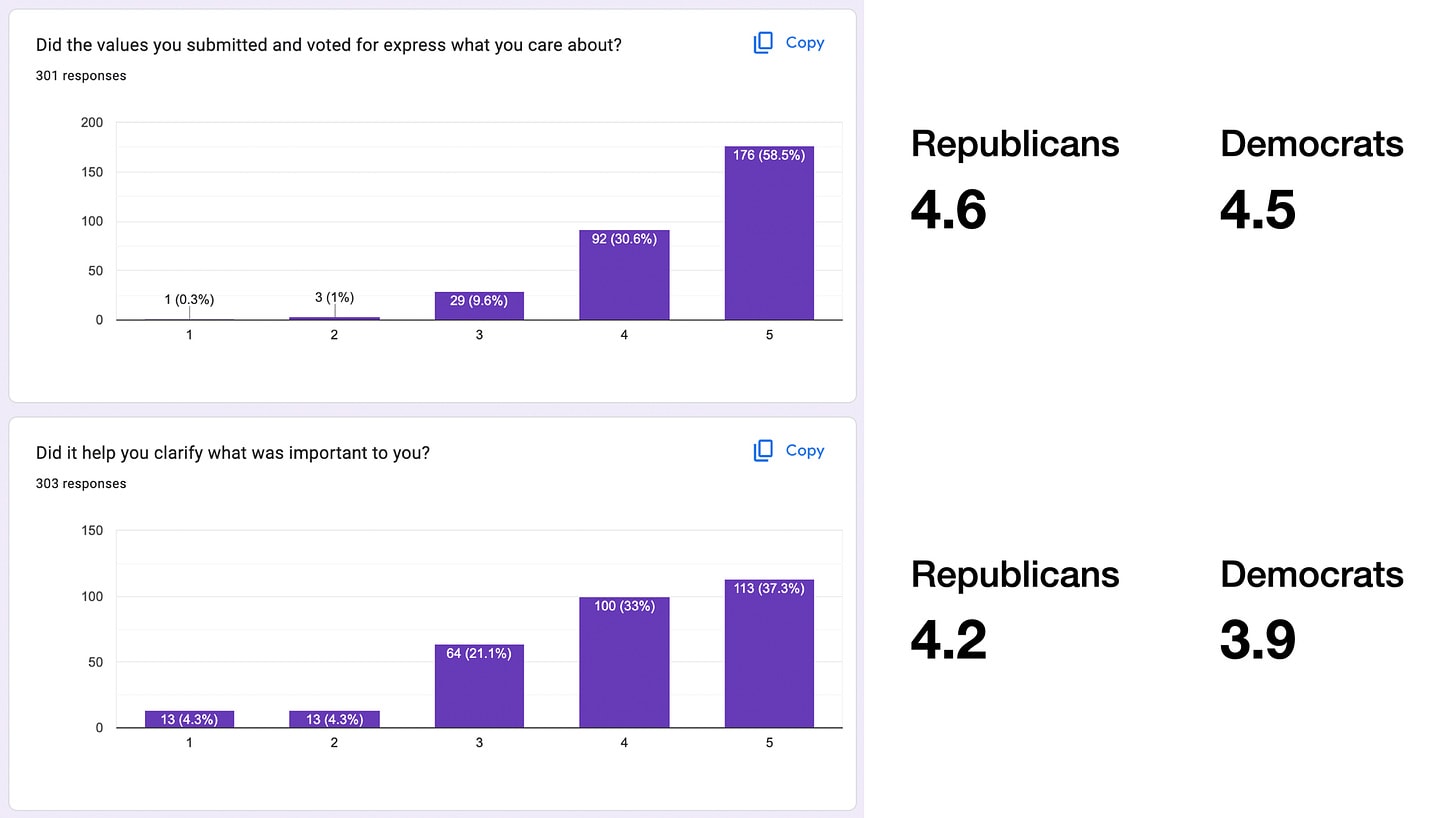

- We show bridging effects of our new democratic process. 500 participants were sampled to represent the US population. We focused on divisive topics, like how and if an LLM chatbot should respond in situations like when a user requests abortion advice. We found that Republicans and Democrats come to agreement on values it should use to respond, despite having different views about abortion itself.

- We present the first moral graph, generated by this sample of Americans, capturing agreement on LLM values despite diverse backgrounds.

- We present good news about their experience: 71% of participants said the process clarified their thinking, and 75% gained substantial respect for those across the political divide.

- Finally, we’ll say why moral graphs are better targets for alignment than constitutions or simple rules like HHH. We’ll suggest advantages of moral graphs in safety, scalability, oversight, interpretability, moral depth, and robustness to conflict and manipulation.

In addition to this report, we're releasing a visual explorer for the moral graph, and open data about our participants, their experience, and their contributions.

...

Our goal with DFT is to make one fine-tuned model that works for Republicans, for Democrats, and in general across ideological groups and across cultures; one model that people all around the world can all consider “wise”, because it's tuned by values we have broad consensus on. We hope this can help avoid a proliferation of models with different tunings and without morality, fighting to race to the bottom in marketing, politics, etc. For more on these motivations, read our introduction post.

To achieve this goal, we use two novel techniques: First, we align towards values rather than preferences, by using a chatbot to elicit what values the model should use when it responds, gathering these values from a large, diverse population. Second, we then combine these values into a “moral graph” to find which values are most broadly considered wise.

Example moral graph, which "charts out how much agreement there is that any one value is wiser than another":

Also, "people endorse the generated cards as representing their values—in fact, as representing what they care about even more than their prior responses. We paid for a representative sample of the US (age, sex, political affiliation) to go through the process, using Prolific. In this sample, we see a lot of convergence. As we report further down, people overwhelmingly felt well-represented with the cards, and say the process helped them clarify their thinking", which is why I paid attention to DFT at all:

Yeah, I also see broad similarities between my vision and that of the Meaning Alignment people. I'm not super familiar with the work they're doing, but I'm pretty positive on the little bits of it I've encountered. I'd say that our main difference is that I'm focusing on ungameable preference synthesis, which I think will be needed to robustly beat Moloch. I'm glad they're doing what they're doing, though, and I wouldn't be shocked if we ended up collaborating at some point.

In other words, there's a question about how to think about truth in a way that honors perspectivalism, while also not devolving into relativism. And the way Jordan and I were thinking about this, was to have each filter bubble -- with their own standards of judgment for what's true and what's good -- to be fed the best content from the other filter bubbles by the standards from within each filter bubble, rather than the worst content, which is more like what we see with social media today.

Seems like Monica Anderson was trying to do something like that with BubbleCity. (pdf, podcast)

Introduction from Ben

Zhukeepa is a LessWronger who I respect and whose views I'm interested in. In 2018 he wrote the first broadly successful explication of Paul Christiano's research ideas for AI alignment, has spent a lot of time interviewing people in AI about their perspectives, and written some more about neuroscience and agent foundations research. He came first in the 2012 US Math Olympiad, and formerly worked on a startup called AlphaSheets that raised many millions of dollars and then got acquihired by Google.

He has also gone around saying (in my opinion) pretty silly-sounding things like he believes in his steelman of the Second Coming of Christ. He also extols the virtues of various psychedelics, and has done a lot of circling and meditation. As a person who thinks most religions are pretty bad for the world and would like to see them die, and thinks many people trick themselves into false insights with spiritual and psychological practices like those Alex has explored, I was interested in knowing what this meant to him and why he was interested in it, and in getting a better sense of whether there's any value here or just distraction.

So we sat down for four 2-hour conversations over the course of four weeks, either written or transcribed, and have published them here as an extended LessWrong dialogue.

I think of this as being more of an interview about Zhukeepa's perspective, with me learning and poking at various parts of it. While I found it interesting throughout, this is a meandering conversation that many may prefer to skip unless they too are especially curious about Zhukeepa's perspective or have a particular interest in the topics discussed. You can skim through the table of contents on the left to get a sense of the discussion, and also read Zhu's introductory thoughts immediately below.

Introduction from Alex

Despite the warnings and admonishments against doing so, I’d decided 5 years ago to venture off to the Dangerous Foreign Land of Religion and Spirituality, after becoming convinced that something in that land was crucial for thinking clearly about AI alignment and AI coordination. Since embarking on that journey, I’ve picked up a lot of customs and perspectives that the locals here on LessWrong are highly suspicious of.

A few months ago, I caught up with my old friend Ben Pace on a walk, who expressed a lot of skepticism toward my views, but nevertheless remained kind, patient, respectful, and curious about understanding where I was coming from. He also seemed to have a lot of natural aptitude in making sense of my views. This gave me hope that:

… which is what motivated me to begin an extended series of dialogues with him. Below are a couple of excerpts going into some points that I'm particularly glad surfaced over the course of this dialogue:

[...]

[...]

I also managed to clarify a couple of my core beliefs over the course of this dialogue, that I'd like to summarize here:

Conversation 1 — April 6th 2024

Alex and Ben had gone on a walk to discuss religion, and decided to continue the discussion over a LessWrong dialogue.

Alex's steelman of the Second Coming of Christ

Why is Alex interested in religions in the first place?

What has Alex gotten from religion?

Resentment, forgiveness, and acceptance

Conversation 2 – April 13th 2024

At the next meeting we recorded audio and had it transcribed, and then lightly edited. This was 2 hours, and Ben was quite underslept.

What Alex gets from religious stories

Orientation toward death

What useful things do old religious stories have to say about how to live life well today?

Forgiving yourself, and devoting your life to something

Changing the minds of all of humanity / steel-Arbital

How Alex thinks about changing the world

Coordinating with the rest of the world sounds annoying

Maybe religious pluralists are sane?

<bathroom break>

A mathematical synthesis of religious metaphysics?

Conversation 3 — April 20th, 2024

We continued our dialogue over Zoom on April 20th 2024. The audio was also transcribed and lightly edited.

On the nature of evil

Religious prophets vs the Comet King on the problem of evil

(Spoilers for UNSONG below.)

Evil is like cancer, maybe

Mistake vs conflict theory on cancer / evil

Forgiving evil in ourselves

Does mistake theory toward sociopaths really make sense?

Rescuing old religions vs competing with old religions

Alex doesn't personally find institutionalized religion compelling

Mistake vs conflict theory toward existing religions

Religious pluralism coalition memetically outcompeting religious exclusivism

Why Alex doesn't want to start a new religion

Does the "Allah will protect me" story suck?

Jesus's empirical claim about evil

Alex doesn't want to tell people how to live

In closing

Conversation 4 — April 28th 2024

We continued our dialogue over Zoom on April 28th 2024. The audio was also transcribed and lightly edited.

Intro

Apparently: Agent Foundations = Religious Metaphysics = Steel-Arbital

Is Steel-Arbital harder than coordinating around not building AGI?

Steel-Arbital as a preference synthesizer

Killing Moloch with a decentralized singleton running on Steel-Arbital

(What would you do with a trusted preference synthesizer?)

Steel-Arbital should reveal and forgive blind spots

Religion as a tool for coordinating with the masses

Steel-Arbital vs religious visions for a fixed world

What religions say about starting new religions

Revisiting agent foundations vs religious metaphysics vs steel-Arbital

Cruxes around coordinating with the masses

Ben finally gets why Zhu doesn't want to start a new religion

Going forward